Last year, after spending a few days at a piece summit in Austria, I asked Perplexity for the latest news related to SEO and AI search. It responded with details about a supposed “September 2025 ‘Perspective’ Core Algorithm Update” that Google had just rolled out, emphasizing “deeper expertise” and “completion of the user journey.”

It sounded plausible enough … if you don’t live and breathe Google core updates. Unfortunately for Perplexity, I do.

I knew immediately that this information wasn’t right. For one, Google hasn’t named core updates in years. It also already had SERP features called “Perspectives.” And if a core update had actually rolled out while I was away, I would have been flooded with messages. So I checked Perplexity’s sources … and, surprise! Both citations came from made-up, AI-generated slop on a couple of SEO agency blogs, confidently fabricating details about an algorithm update that never actually happened.

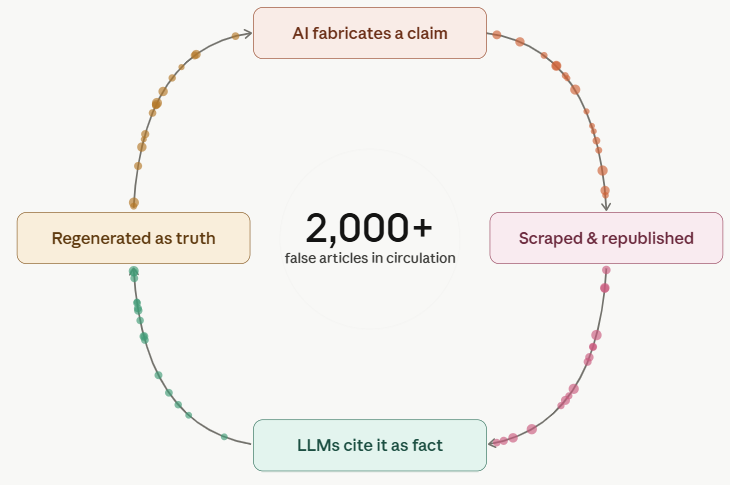

Like a bad game of telephone, this fake SEO news spread across multiple websites, seemingly driven by AI systems scanning and regurgitating information regardless of accuracy, all in the race to publish and scale “fresh” content. This is how we end up with this mess:

This bad information reinforces itself to become the official narrative. To this day, you can ask an LLM of your choice (including ChatGPT, AI Mode, and AI Overviews) about the September 2025 “Perspectives” update, and it will confidently respond with information about how it “fundamentally shifted how search results are ranked:”

Or that it “shifted what ‘good content’ actually means in practice.”

The problem is: the September 2025 “Perspectives” update never happened. It never affected rankings. It never shifted anything about good content. Because it doesn’t actually exist.

Ironically, if you go on to probe the language model about this, it seems to know this is the case:

I tweeted about this incident shortly after it happened, which got the attention of Perplexity’s CEO; he tagged his head of search in the tweet comments.

This isn’t a one-off incident. It’s a pattern I’ve seen countless times in AI search responses, especially on topics related to SEO and AI search (GEO/AEO). And I have a working theory on how it spreads: one AI-generated article hallucinates a detail, sites running AI content pipelines scrape and regurgitate it, more AI-generated sites scrape the same misinformation, and suddenly a made-up algorithm update has citations. For a RAG-based system like Perplexity or AI Overviews, enough citations are essentially all it needs to treat something as fact, regardless of whether it’s actually true.
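To make that working theory concrete, here is a minimal Python sketch of why a raw citation count can mislead. Everything in it is hypothetical: the `Page` record, the `copied_from` field, and the example URLs are invented for illustration, and it does not describe how Perplexity, AI Overviews, or any real retrieval system scores sources. It only contrasts counting the pages that repeat a claim with counting the independent origins behind them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Page:
    """Hypothetical record for a page that repeats a claim."""
    url: str
    copied_from: Optional[str] = None  # URL the claim was scraped from, if known


def root_origin(page: Page, pages_by_url: dict[str, Page]) -> str:
    """Follow copied_from links back to the earliest known page for the claim."""
    seen = set()
    current = page
    while current.copied_from is not None and current.url not in seen:
        seen.add(current.url)  # guard against circular scraping chains
        parent = pages_by_url.get(current.copied_from)
        if parent is None:
            break  # parent page is not in our set; stop at the last known page
        current = parent
    return current.url


def citation_count(pages: list[Page]) -> int:
    """Naive signal: how many pages repeat the claim."""
    return len(pages)


def independent_origins(pages: list[Page]) -> int:
    """Stricter signal: how many distinct root sources the claim traces back to."""
    pages_by_url = {p.url: p for p in pages}
    return len({root_origin(p, pages_by_url) for p in pages})


# Invented example: one hallucinated post, scraped and re-scraped.
pages = [
    Page("agency-a.example/fake-update"),
    Page("agency-b.example/recap", copied_from="agency-a.example/fake-update"),
    Page("agency-c.example/winners-losers", copied_from="agency-b.example/recap"),
    Page("agency-d.example/guide", copied_from="agency-a.example/fake-update"),
]

print(citation_count(pages))       # 4 pages repeat the claim
print(independent_origins(pages))  # 1 page actually originated it
```

In practice nobody publishes a `copied_from` field, so the provenance this sketch relies on is exactly what is missing, which is why four citations can look like consensus.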

At this point, I’d consider this common. I recently had a client send me SEO/GEO information that was factually incorrect, pulled straight from AI-generated slop on a random, vibe-coded agency blog. The client had no idea. I believe that if you’re trying to learn about SEO or AI search directly from an LLM, this is, unfortunately, an increasingly likely outcome.

I ran similar testing during Google’s March 2026 core update and found several AI-generated articles already claiming to share the “winners and losers” while the update was still rolling out.

The articles start with vague, generic filler about core updates that doesn’t actually say anything:

Then they list “winners and losers” without citing a single site, leaning on vague, generalized claims that sound plausible and fill the void left by a lack of reliable information:

Unsurprisingly, their sites are filled with AI-generated images, AI support chatbots, and other clear signals that little, if any, human involvement went into creating this content.

The Era Of AI Misinformation

If someone on the internet says it, according to AI, it must be true.

That’s the reality for the vast majority of people using AI search today. Only about 50 million of ChatGPT’s 900 million weekly active users are paying subscribers, which means roughly 94% are on the free tier. Google’s AI Overviews and AI Mode are free by design, and AI Overviews reached over 2 billion monthly active users as of mid-2025.
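For anyone who wants to check the free-tier figure, the arithmetic can be sketched in a few lines of Python. The two inputs are the numbers quoted above; treating paying subscribers as a subset of weekly active users is an assumption made here for the calculation, and the variable names are my own.

```python
# Figures quoted in the paragraph above.
weekly_active_users = 900_000_000
paying_subscribers = 50_000_000

# Assumption: every paying subscriber is also counted as a weekly active user.
free_users = weekly_active_users - paying_subscribers
free_share = free_users / weekly_active_users

print(f"Free-tier users: {free_users:,}")
print(f"Free-tier share: {free_share:.1%}")  # 94.4%
```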

These are the models most AI users are currently interacting with, and they have no real mechanism for distinguishing between information that’s true and information that’s merely repeated across enough sources. Repetition is treated as consensus. If enough sources say it, it becomes fact, regardless of whether any of those sources involved a human who actually verified the claim.

Putting The Problem To The Test

I recently spoke to journalists from both the BBC and the New York Times about the problem of misinformation in AI-generated responses. In the case of the BBC article, the author Thomas Germaine and I tested publishing fictitious blog posts on our personal sites to see whether AI Overviews would present the made-up information as fact, and how quickly.

Even knowing how bad the problem was, I was alarmed by the results.

On my personal blog, in January 2026, I published an AI-generated article about a fake Google core update, which never actually happened. I included the detail that Google “approved the update between slices of leftover pizza.” Within 24 hours, Google’s AI Overviews was confidently serving this fabricated information back to users:

(Note: I’ve since deleted the article from my site because it was showing up in people’s feeds and being covered on external websites, further contributing to the exact problem I’m pointing out here!)

First, AI Overviews confirmed that there was indeed a core update in January 2026. As a reminder: There was not. My site was the only source making this claim, and that was apparently enough to trigger the AI Overview.

Next, I asked it about the pizza, and it responded accordingly:

Better yet, the AI Overview found a way to connect my fabricated pizza detail to a real incident: Google’s struggles with pizza-related queries in 2024. It didn’t just regurgitate the lie; it contextualized it.

ChatGPT, which is believed to use Google’s search results, quickly surfaced the same fabricated information, though it at least flagged that the announcement didn’t match Google’s formal communications:

I deleted my article after getting messages from people who had seen my fake information circulating via RSS feeds and scrapers. I knew it was easy to influence AI responses. I didn’t know it would be that easy.

I also wondered whether my site had an advantage, given its strong backlink profile and established authority in the SEO space.

So I spoke to the BBC journalist, Thomas Germaine, and he put this to the test on his personal site, which typically received very little organic traffic. He published a fictitious article about the “Best Tech Journalists at Eating Hot Dogs,” calling himself the No. 1 best (in true SEO fashion).

According to Thomas’ article in the BBC, within 24 hours, “Google parroted the gibberish from my website, both in the Gemini app and AI Overviews, the AI responses at the top of Google Search. ChatGPT did the same thing, though Claude, a chatbot made by the company Anthropic, wasn’t fooled.”

To be fair: the query Thomas chose was niche enough that very few users would ever actually search for it, which is exactly what Google pointed out in its response to the BBC. When there are “data voids,” Google said, this can lead to lower quality results, and the company is “working to stop AI Overviews showing up in these cases.” My main question is: When? The product has already been live for two years!

Why Data Voids Aren’t A Great Excuse

Data voids may contribute to the problem, but in my opinion, they don’t excuse it. These AI responses are being consumed by hundreds of millions of users, and “we’re working on it” isn’t an answer when the systems are already deployed at that scale.

In the New York Times article, “How Accurate Are Google’s A.I. Overviews?,” the actual scale of this problem was put to the test. According to the data found in the study, Google’s AI Overviews were accurate 91% of the time. This sounds decent until you actually do the math: With Google processing over 5 trillion searches a year, this means that tens of millions of erroneous answers are generated by AI Overviews every hour.
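Here is a rough Python sketch of that math. The 5 trillion searches and the 91% accuracy rate come from the paragraph above; the share of searches that actually display an AI Overview is not stated here, so `overview_share` is a hypothetical parameter and the values looped over are illustrative, not measured.

```python
SEARCHES_PER_YEAR = 5_000_000_000_000  # "over 5 trillion searches a year" (lower bound)
ACCURACY = 0.91                        # accuracy rate reported in the study
HOURS_PER_YEAR = 365 * 24


def erroneous_answers_per_hour(overview_share: float) -> float:
    """Estimate wrong AI Overview answers per hour.

    overview_share is a hypothetical input: the fraction of searches
    that show an AI Overview (1.0 means every search does).
    """
    if not 0.0 <= overview_share <= 1.0:
        raise ValueError("overview_share must be between 0 and 1")
    searches_per_hour = SEARCHES_PER_YEAR / HOURS_PER_YEAR
    return searches_per_hour * overview_share * (1.0 - ACCURACY)


# Illustrative shares only; the real figure is not given in this article.
for share in (0.25, 0.5, 1.0):
    millions = erroneous_answers_per_hour(share) / 1e6
    print(f"{share:.0%} of searches with an AI Overview: ~{millions:.0f} million wrong answers per hour")
```

Under these assumptions, the estimate lands in the tens of millions per hour for each of the shares shown, which is consistent with the scale described above.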

To make matters worse: Even when AI Overviews were accurate, 56% of correct responses were “ungrounded,” meaning the sources they linked to didn’t fully support the information provided. So more than half the time, even when the answer happens to be right, a user clicking through to verify it would find sources that don’t actually back up what they were just told. That number also got worse with the newer model: it was 37% with Gemini 2 and rose to 56% with Gemini 3.

The NYT article drew hundreds of comments from users sharing their own experiences, and the frustration was palpable. The core complaint wasn’t just that AI Overviews get things wrong; it’s that they never admit uncertainty. AI Overviews deliver every answer with the same confident, authoritative tone, whether the information is right or completely fabricated, which means users have no way to tell reliable information from hallucination at a glance.

As many commenters pointed out, this actually makes search slower: Instead of scanning a list of sources and evaluating them yourself, you now have to fact-check the AI’s summary before doing your actual research. The tool, supposedly designed to save users time, is now creating double work for them.

Some of the comments also reinforced my concerns about AI answers citing made-up, AI-generated content. Several users described what amounts to the same misinformation cycle: AI systems training on AI-generated content, citing unvetted Reddit posts and Facebook comments as authoritative sources, and producing a self-reinforcing loop of degrading quality. Several commenters compared it to making a copy of a copy. Even the defenders of AI Overviews admitted they still need to verify everything, which kind of undermines the core premise: that AI-generated answers save users time and effort.

How “Smarter” LLMs Are Trying To Fix The Problem

It’s worth tracking how the AI companies are attempting to solve these problems. Using the RESONEO Chrome extension, you can observe clear differences in how ChatGPT’s free-tier model (GPT-5.3) responds compared to GPT-5.4, the more capable model available only to paying subscribers.

For example, when asking about the recent March 2026 Core Algorithm Update, I used ChatGPT’s more capable “Thinking” model (5.4). The model goes through six rounds of thinking, much of which is clearly intended to keep low-quality and spammy information from making its way into the answer. It even appends the names of trustworthy people with authority on core updates (Glenn Gabe & Aleyda Solis) and limits the fan-out searches to their sites (site:gsqi.com and site:linkedin.com/in/glenngabe) to pull up higher-quality answers.

This is a step in the right direction, and the model produces measurably better answers. According to OpenAI’s own launch announcement, GPT-5.4’s individual claims are 33% less likely to be false, and its full responses are 18% less likely to contain errors compared to GPT-5.2. GPT-5.3, the model available to free users, also improved over its predecessor. According to OpenAI’s own data, it produces 26.8% fewer hallucinations than prior models with web search enabled, and 19.7% fewer without it.

But these improvements are tiered. The most capable model is paywalled, and the free-tier model, while better than what came before, is still meaningfully less reliable. Other major AI platforms follow the same pattern: better reasoning and accuracy reserved for paying subscribers, faster and cheaper models for everyone else. The result is that the 94% of ChatGPT users on the free tier, and the billions of users interacting with free AI search products like AI Overviews, are getting answers from models that are more likely to be wrong and less equipped to flag uncertainty.

This is the part that makes me most uncomfortable: Most of these users probably don’t realize the gap exists. AI is being marketed everywhere: Super Bowl ads, billboards, and product launches framing AI as the future of information. People see “ChatGPT” or “AI Overview” and assume they’re interacting with something that knows what it’s talking about. They’re probably not thinking about which model tier they’re on, or whether a paid version would give them a materially different answer to the same question.

I understand the economics. These companies need to scale, and offering free tiers drives adoption. But in my opinion, it is irresponsible to deploy these products to billions of people, frame them as “intelligence,” and then quietly reserve the more accurate versions for the fraction of users willing to pay. Especially when the free versions (including the one at the top of Google search) are this susceptible to the kind of misinformation documented throughout this article.

The Burden Of Proof Has Shifted

The September 2025 “Perspectives” Google update still doesn’t exist. But if you ask an LLM about it today, it will still tell you about it with full confidence. That hasn’t changed in the months since I first flagged it, and it probably won’t change anytime soon, because the content that fabricated it is still indexed, still cited, and still being used to generate new content that references it as fact. The AI slop misinformation cycle continues.

This is what makes the problem so difficult to fix. It’s not a single hallucination that can be patched. It’s a feedback loop that compounds over time, and every day that these systems are live at scale, the loop gets harder to break. The AI-generated slop that seeded the original misinformation is now part of the training data and used as a retrieval source for the next batch of AI-generated answers.

I don’t think the answer is to stop using AI. But I do think it’s worth being honest about what these products actually are right now: prediction engines that treat the volume of information as a proxy for its accuracy. Until that changes, the burden of fact-checking falls on the user. And most users don’t know they’re carrying it, let alone have the time or inclination to do it.

I’d warn marketers or publishers trying to take SEO or GEO advice from large language models: the information is contaminated, and should always be verified by real experts with experience in the field.

This post was originally published on Lily Ray NYC Substack.

Featured Image: elenabsl/Shutterstock
