If more than half the web runs on a content management system, then the majority of technical SEO standards are being positively shaped before an SEO even starts work on it. That’s the lens I took into the 2025 Web Almanac SEO chapter (for clarity, I co-authored the 2025 Web Almanac SEO chapter referenced in this article).

Rather than asking how individual optimization decisions influence performance, I wanted to understand something more fundamental: how much of the web’s technical SEO baseline is determined by CMS defaults and the ecosystems around them.

SEO often feels intensely hands-on – perhaps too much so. We debate canonical logic, structured data implementation, crawl control, and metadata configuration as if every site were a bespoke engineering project. But when 50%+ of pages in the HTTP Archive dataset sit on CMS platforms, those platforms become the invisible standard-setters. Their defaults, constraints, and feature rollouts quietly define what “normal” looks like at scale.

This piece explores that influence using 2025 Web Almanac and HTTP Archive data, specifically:

- How CMS adoption trends track with core technical SEO signals.

- Where plugin ecosystems appear to shape implementation patterns.

- And how emerging standards like llms.txt are spreading as a result.

The question is not whether SEOs matter. It’s whether we’ve been underestimating who sets the baseline for the modern web.

The Backbone Of Web Design

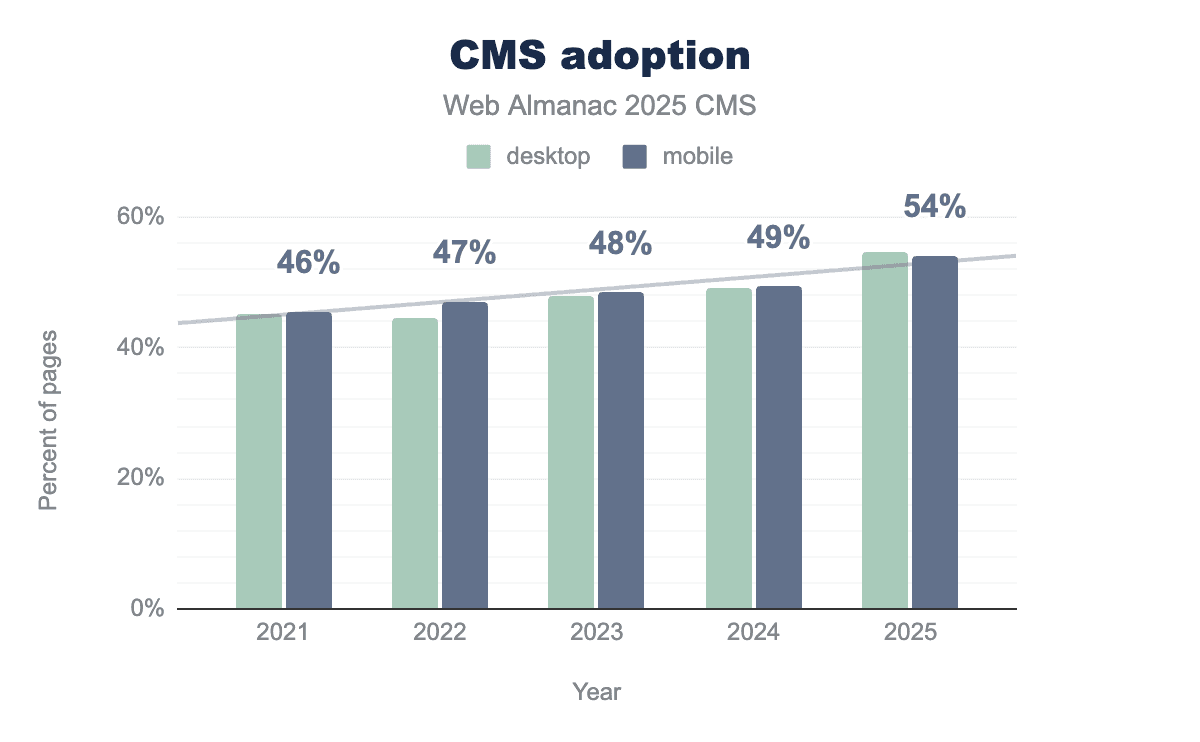

The 2025 CMS chapter of the Web Almanac saw CMS adoption hit a milestone: over 50% of pages are on CMSs. In case you were unsold on how much of the web is carried by CMSs, over 50% of 16 million websites is a significant amount.

Which CMSs are the most popular may, again, not be surprising, but it is worth reflecting on which of them has the most impact.

WordPress is still the most used CMS, by a long way, even if it has dropped marginally in the 2024 data. Shopify, Wix, Squarespace, and Joomla trail a long way behind, but they still have a significant impact, especially Shopify, on ecommerce specifically.

SEO Functions That Ship As Defaults In CMS Platforms

CMS platform defaults are important because, I believe, a lot of basic technical SEO standards exist either as default setups or only on the relatively small number of websites that have dedicated SEOs, or people who at least build to or work with SEO best practice.

When we talk about “best practice,” we’re on slightly shaky ground for some, as there isn’t a universal, prescriptive view on this one, but I’d consider:

- Descriptive “SEO-friendly” URLs.

- Editable title and meta description.

- XML sitemaps.

- Canonical tags.

- Editable meta robots directives.

- Structured data – at least a basic level.

- Robots.txt editing.

Of the main CMS platforms, here is what they – self-reportedly – have as “default.” Note: Some platforms – like Shopify – would say they’re SEO-friendly (and to be honest, it’s “good enough”), but many SEOs would argue that they’re not friendly enough to pass this test. I’m not weighing into those nuances, but I’d say both Shopify and those SEOs make some good points.

| CMS | SEO-friendly URLs | Title & meta description UI | XML sitemap | Canonical tags | Robots meta support | Basic structured data | Robots.txt |
| --- | --- | --- | --- | --- | --- | --- | --- |
| WordPress | Yes | Partial (theme-dependent) | Yes | Yes | Yes | Limited (Article, BlogPosting) | No (plugin or server access required) |
| Shopify | Yes | Yes | Yes | Yes | Limited | Product-focused | Limited (editable via robots.txt.liquid, constrained) |
| Wix | Yes | Guided | Yes | Yes | Limited | Basic | Yes (editable in UI) |
| Squarespace | Yes | Yes | Yes | Yes | Limited | Basic | No (platform-managed, no direct file control) |
| Webflow | Yes | Yes | Yes | Yes | Yes | Manual JSON-LD | Yes (editable in settings) |
| Drupal | Yes | Partial (core) | Yes | Yes | Yes | Minimal (extensible) | Partial (module or server access) |
| Joomla | Yes | Partial | Yes | Yes | Yes | Minimal | Partial (server-level file edit) |
| Ghost | Yes | Yes | Yes | Yes | Yes | Article | No (server/config level only) |
| TYPO3 | Yes | Partial | Yes | Yes | Yes | Minimal | Partial (config or extension-based) |

Based on the above, I’d say that most SEO basics can be covered by most CMSs “out of the box.” Whether they work well for you, and whether you can achieve the exact configuration that your specific circumstances require, are two other important questions – ones which I’m not taking on. However, it often comes down to these points:

- It is possible for these platforms to be used badly.

- It is possible that the business logic you need will break/not work with the above.

- There are many more advanced SEO features that aren’t out of the box but are just as important.

We are talking about foundations here, but when I reflect on what shipped as “default” 15+ years ago, progress has been made.

Fingerprints Of Defaults In The HTTP Archive Data

Given that a lot of CMSs ship with these standards, do these SEO defaults correlate with CMS adoption? In many ways, yes. Let’s explore this in the HTTP Archive data.

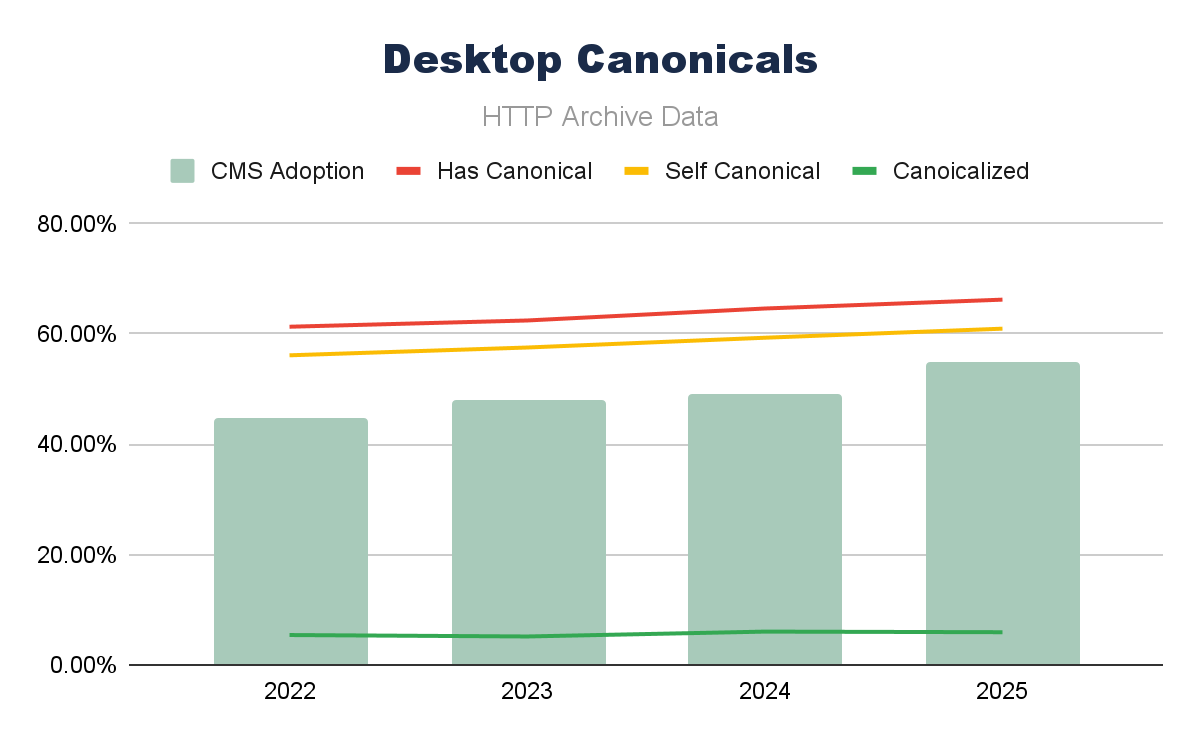

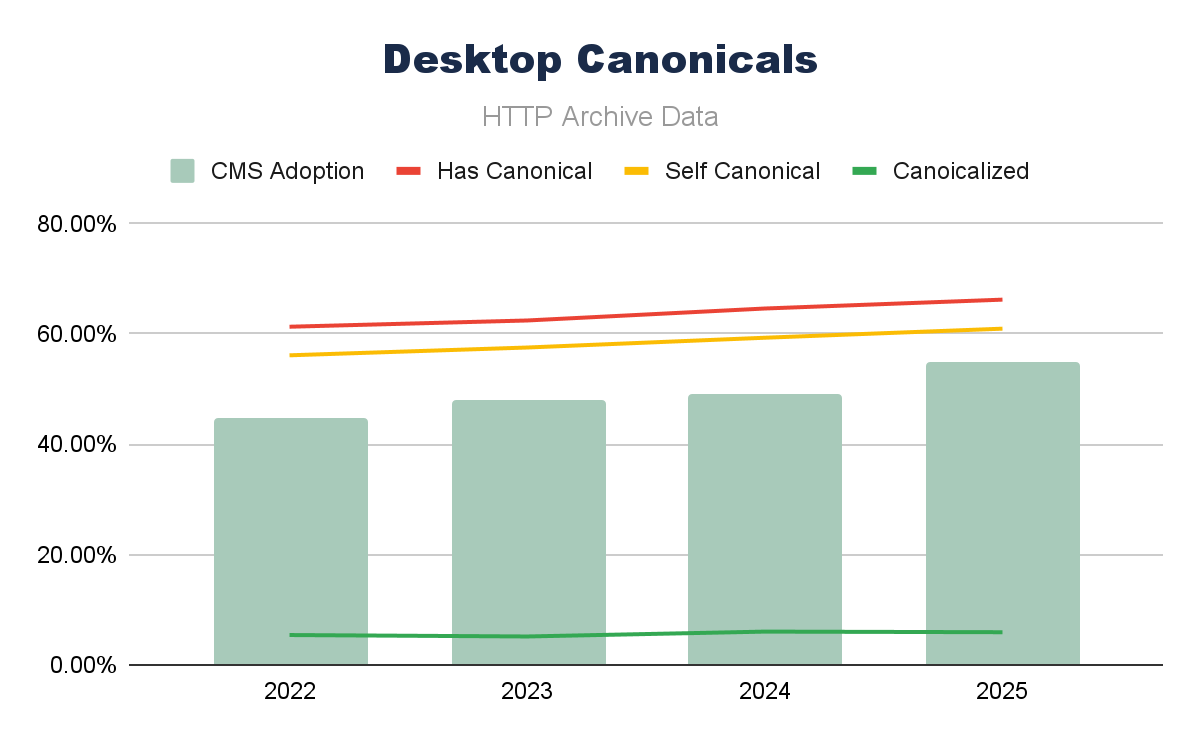

Canonical Tag Adoption Correlates With CMS

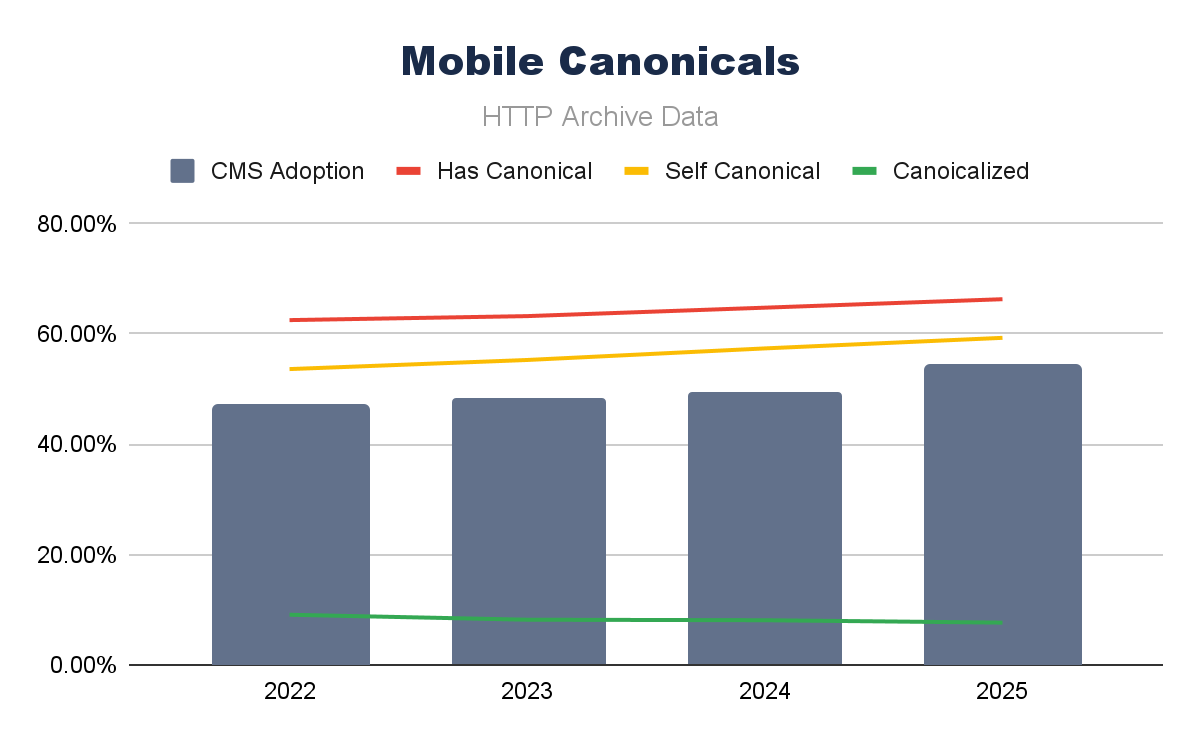

Combining canonical tag adoption data with (all) CMS adoption over the last four years, we can see that for both mobile and desktop, the trends seem to follow each other quite closely.

Running a simple Pearson correlation over these elements makes this strong correlation even clearer, both for canonical tag implementation and for the presence of self-canonical URLs.

What differs is the correlation for canonicalized URLs, which appears to be negative on mobile and lower (but still positive) on desktop. A drop in canonicalized pages is largely causing this negative correlation, and the reasons behind this could be many (and harder to be sure of).

Canonical tags are a critical element for technical SEO; their continued adoption does certainly seem to track the growth in CMS use, too.
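To make the Pearson step concrete, here is a minimal Python sketch of the calculation. The yearly percentages are placeholder values invented for the example, not Almanac or HTTP Archive figures, and the function and variable names (`pearson`, `cms_share`, and so on) are hypothetical. It is not the query used for the chapter, only an illustration of how a positive and a negative correlation against CMS adoption can arise.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Covariance and variances (un-normalized; the 1/n factors cancel out).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


# ASSUMPTION: hypothetical yearly percentages, for illustration only.
cms_share = [44.0, 46.0, 48.0, 51.0]            # % of pages on a CMS
canonical_share = [58.0, 60.0, 63.0, 65.0]      # % of pages with a canonical tag
canonicalized_share = [9.0, 8.5, 8.6, 7.0]      # % of pages canonicalized elsewhere

print("canonical tag vs CMS:", round(pearson(cms_share, canonical_share), 3))
print("canonicalized vs CMS:", round(pearson(cms_share, canonicalized_share), 3))
```

With only four yearly data points, a coefficient like this describes how closely two trend lines move together; it says nothing on its own about causation.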

Schema.org Data Types Correlate With CMS

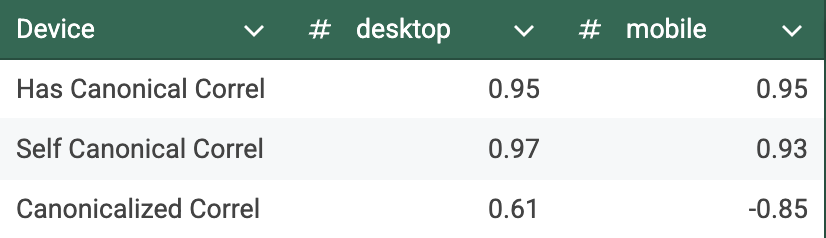

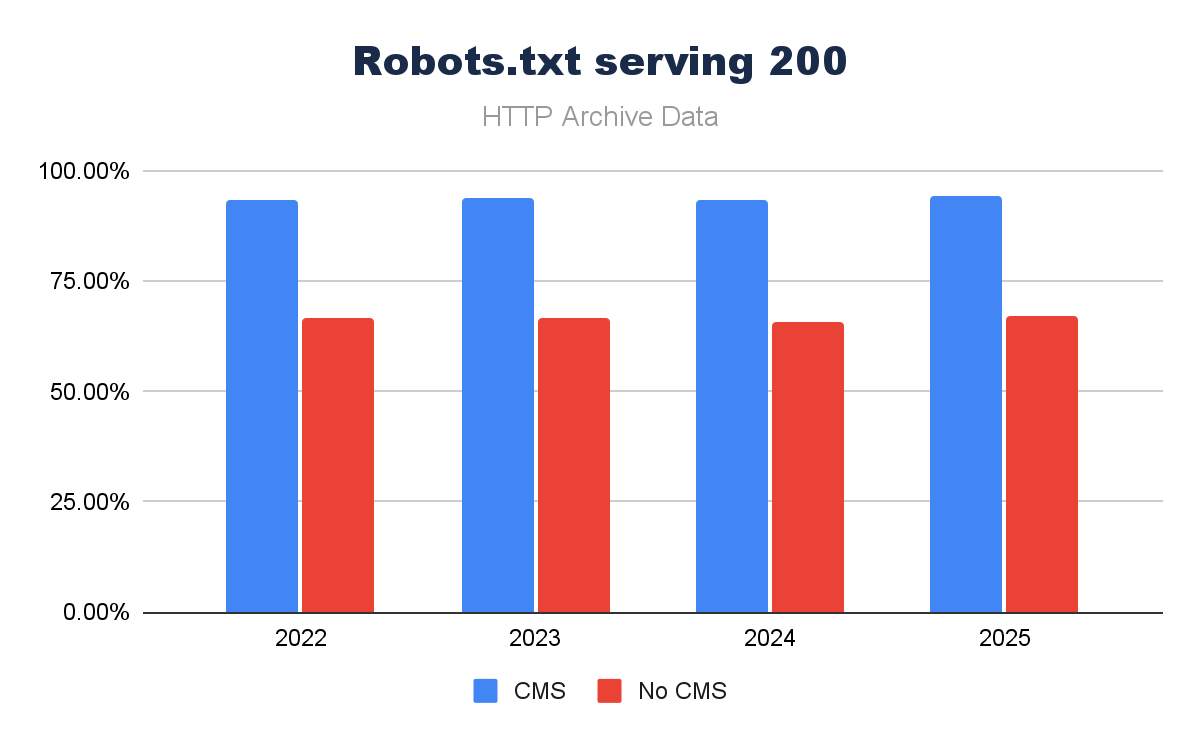

Schema.org types against CMS adoption show similar trends, but are less definitive overall. There are many different Schema.org types, but if we plot CMS adoption against the ones most common to SEO concerns, we can observe a broadly rising picture.

With the exception of Schema.org WebSite, we can see CMS growth and structured data following similar trends.

But we must note that Schema.org adoption is quite considerably lower than CMS adoption overall. This could be due to most CMS defaults being far less comprehensive with Schema.org. When we look at specific CMS examples (shortly), we’ll see far stronger links.

Schema.org implementation is still mostly intentional, specialist, and not as widespread as it could be. If I were a search engine or creating an AI Search tool, would I rely on universal adoption of these, seeing the data like this? Possibly not.

Robots.txt

Given that robots.txt is a single file that has some agreed standards behind it, its implementation is far simpler, so we could expect higher levels of adoption than Schema.org.

The presence of a robots.txt is pretty important, mostly to limit search engine crawling to specific areas of the site. We are starting to see an evolution – as we noted in the 2025 Web Almanac SEO chapter – in which robots.txt is used even more as a governance piece, rather than just housekeeping. That is a key sign that we’re using our key tools differently in the AI search world.
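As a small illustration of robots.txt acting as governance rather than housekeeping, the sketch below uses Python’s standard-library `urllib.robotparser` to check what a given crawler may fetch. The robots.txt content, the `example.com` URLs, and the `can_crawl` helper are hypothetical; `GPTBot` is used only as an example of an AI crawler token. Python’s parser may not interpret rules exactly as every crawler does, so treat this as an approximation.

```python
from urllib.robotparser import RobotFileParser

# ASSUMPTION: hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/

User-agent: GPTBot
Disallow: /
"""


def can_crawl(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given user agent may fetch the URL under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Housekeeping rule: every crawler is kept out of /cart/ only.
print(can_crawl(ROBOTS_TXT, "Googlebot", "https://example.com/blog/post"))
# Governance rule: one named AI crawler is kept out of the whole site.
print(can_crawl(ROBOTS_TXT, "GPTBot", "https://example.com/blog/post"))
```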

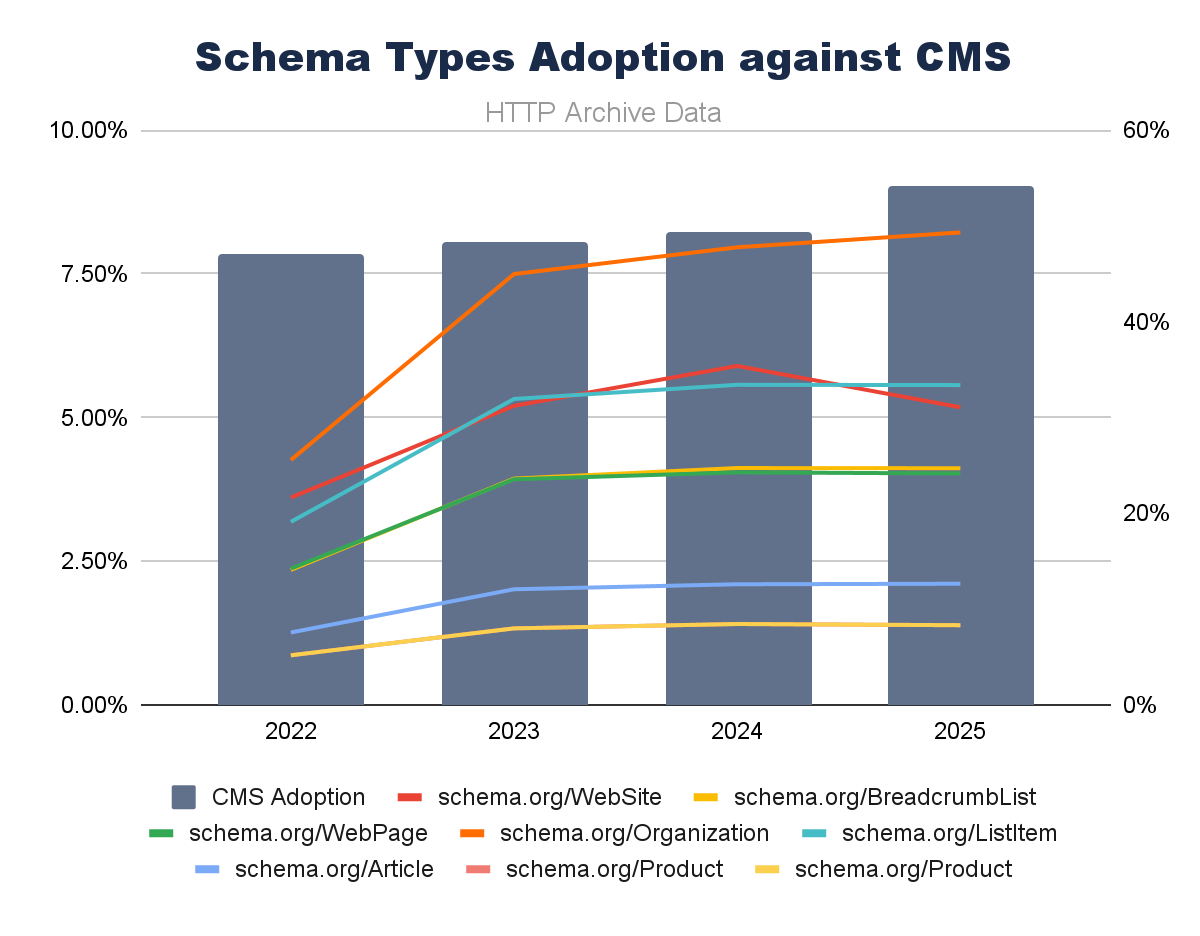

But before we consider the more advanced implementations, how much of a factor is a CMS in ensuring a robots.txt is present? It looks like, over the last four years, CMS platforms have been driving significantly more robots.txt files serving a 200 response:

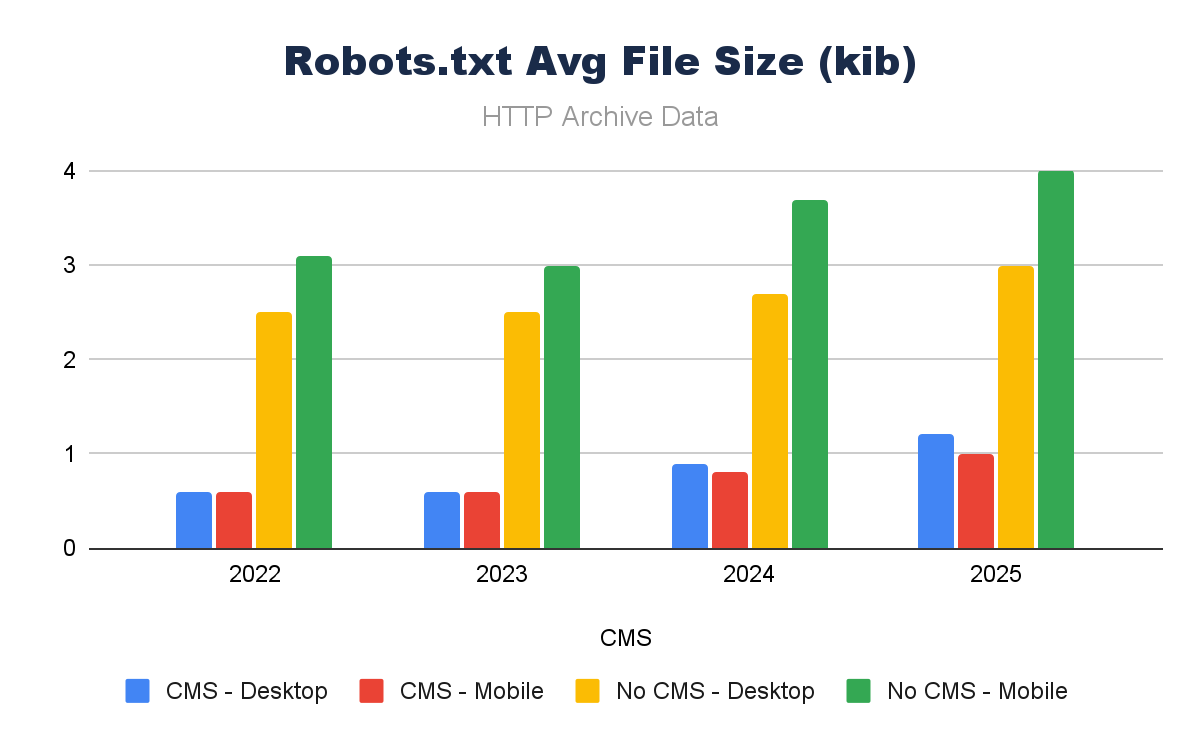

What is more curious, however, is when you consider the size of the robots.txt files. Non-CMS platforms have robots.txt files that are significantly larger.

Why could this be? Are they more advanced in non-CMS platforms, longer files, more bespoke rules? Probably in some cases, but we’re missing another impact of a CMS’s standards – compliant (valid) robots.txt files.

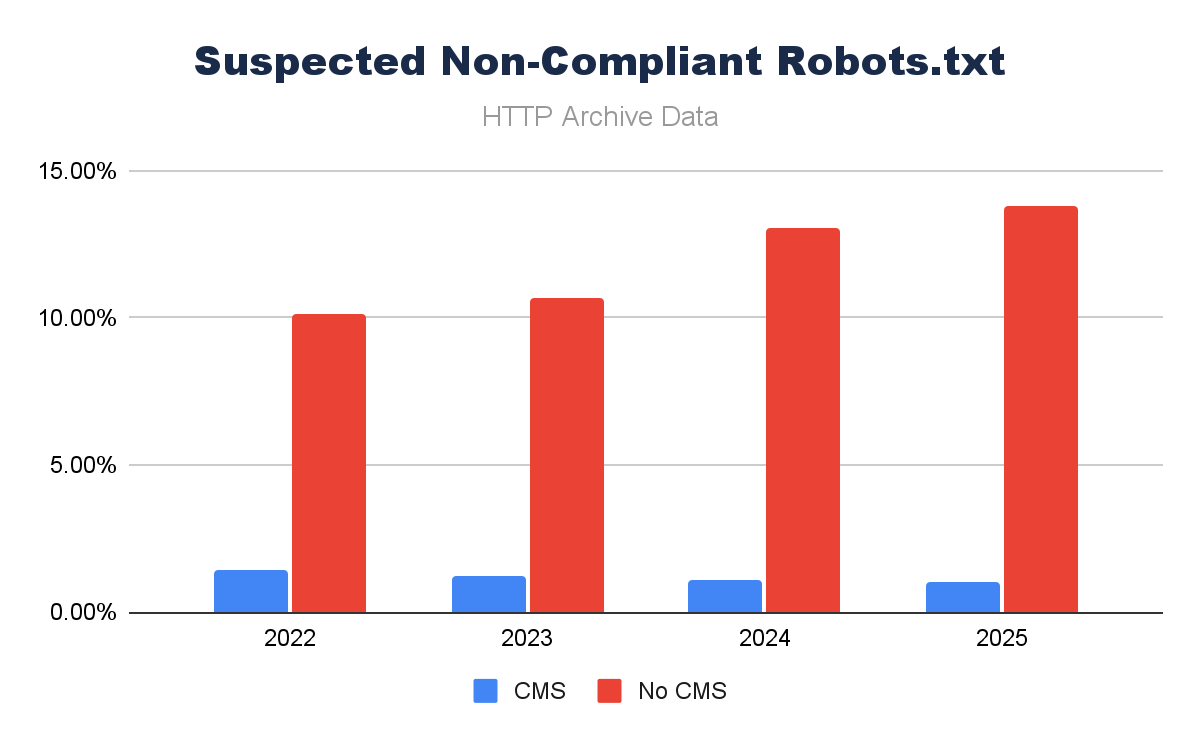

A lot of robots.txt files serve a valid 200 response, but often they’re not txt files, or they’re redirecting to 404 pages or similar. When we limit this list to only files that contain user-agent declarations (as a proxy), we see a different story.

Approaching 14% of robots.txt files served on non-CMS platforms are likely not even robots.txt files.
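The user-agent proxy described above can be sketched in a few lines of Python. This is an approximation under stated assumptions, not the chapter’s actual query: the function names and the sample responses are hypothetical, and the only rule applied is “200 status plus at least one `User-agent:` line.”

```python
import re

# Matches a line that starts a rule group, e.g. "User-agent: *" (case-insensitive).
USER_AGENT_LINE = re.compile(r"^\s*user-agent\s*:", re.IGNORECASE | re.MULTILINE)


def looks_like_robots_txt(status: int, body: str) -> bool:
    """Proxy check: a 200 response whose body has at least one user-agent line."""
    return status == 200 and bool(USER_AGENT_LINE.search(body))


def share_not_robots(responses) -> float:
    """Share of 200 responses at /robots.txt that fail the proxy check."""
    ok_bodies = [body for status, body in responses if status == 200]
    if not ok_bodies:
        return 0.0
    invalid = sum(1 for body in ok_bodies if not looks_like_robots_txt(200, body))
    return invalid / len(ok_bodies)


# ASSUMPTION: hypothetical (status, body) pairs, for illustration only.
sample = [
    (200, "User-agent: *\nDisallow: /wp-admin/"),
    (200, "<html><body>Page not found</body></html>"),  # soft 404 served as 200
    (404, ""),
]
print(share_not_robots(sample))
```

A proxy like this will miss edge cases (for example, a file containing only comments), which is why it is best read as a rough lower bound on “real” robots.txt files rather than a validator.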

A robots.txt is easy to set up, but it is a conscious decision. If it’s forgotten/overlooked, it simply won’t exist. A CMS makes it more likely that a site has a robots.txt, and what’s more, when it is in place, it makes it easier to manage/maintain – which IS key.

WordPress-Specific Defaults

CMS platforms, it seems, cover the basics, but more advanced options – which still need to be defaults – often need additional SEO tools to enable.

Interrogating WordPress-specific sites with the HTTP Archive data will be easiest as we get the largest sample, and the Wappalyzer data gives a reliable way to judge the impact of WordPress-specific SEO tools.

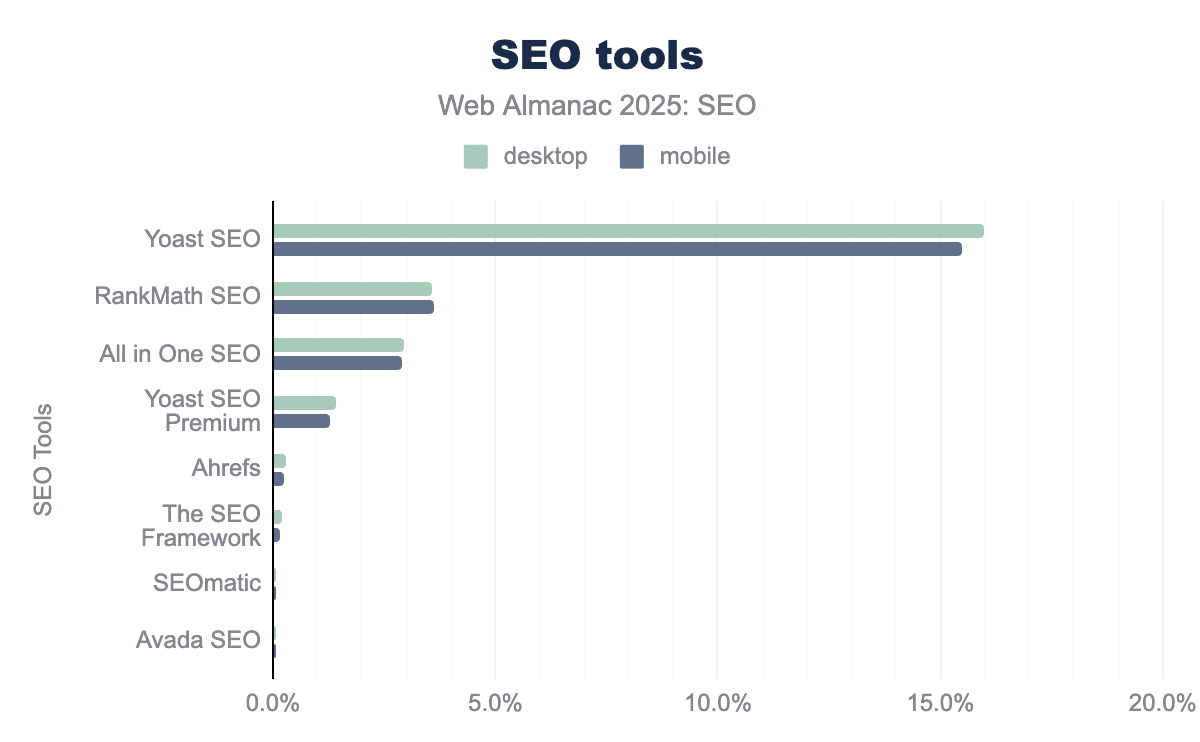

From the Web Almanac, we can see which SEO tools are the most installed on WordPress sites.

For anyone working within SEO, this is unlikely to be surprising. If you are an SEO and have worked on WordPress, there is a high chance you have used one of the top three. What IS worth considering right now is that while Yoast SEO is by far the most prevalent within the data, it is seen on barely over 15% of sites. Even the most popular SEO plugin on the most popular CMS still has a relatively small share.

Of these top three plugins, let’s first consider how their “defaults” differ. These are similar to some of WordPress’s, but we can see many more advanced features that come as standard.

| SEO Capability | All-in-One SEO | Yoast SEO | Rank Math |
| --- | --- | --- | --- |
| Title tag control | Yes (global + per-post) | Yes | Yes |
| Meta description control | Yes | Yes | Yes |
| Meta robots UI | Yes (index/noindex etc.) | Yes | Yes |
| Default meta robots output | Explicit index,follow | Explicit index,follow | Explicit index,follow |
| Canonical tags | Auto self-canonical | Auto self-canonical | Auto self-canonical |
| Canonical override (per URL) | Yes | Yes | Yes |
| Pagination canonical handling | Limited | Historically opinionated | More configurable |
| XML sitemap generation | Yes | Yes | Yes |
| Sitemap URL filtering | Basic | Basic | More granular |
| Inclusion of noindex URLs in sitemap | Possible by default | Historically possible | Configurable |
| Robots.txt editor | Yes (plugin-managed) | Yes | Yes |
| Robots.txt comments/signatures | Yes | Yes | Yes |
| Redirect management | Yes | Limited (free) | Yes |
| Breadcrumb markup | Yes | Yes | Yes |
| Structured data (JSON-LD) | Yes (templated) | Yes (templated) | Yes (templated, broad) |
| Schema type selection UI | Yes | Limited | Extensive |
| Schema output style | Plugin-specific | Plugin-specific | Plugin-specific |
| Content analysis/scoring | Basic | Heavy (readability + SEO) | Heavy (SEO score) |
| Keyword optimization guidance | Yes | Yes | Yes |
| Multiple focus keywords | Paid | Paid | Free |
| Social metadata (OG/Twitter) | Yes | Yes | Yes |
| llms.txt generation | Yes – enabled by default | Yes – one-check enable | Yes – one-check enable |
| AI crawler controls | Via robots.txt | Via robots.txt | Via robots.txt |

Editable metadata, structured data, robots.txt, sitemaps, and, more recently, llms.txt are the most notable. It is worth noting that a lot of the functionality is more “back-end,” so not something we’d be as easily able to see in the HTTP Archive data.

Structured Data Impact From SEO Plugins

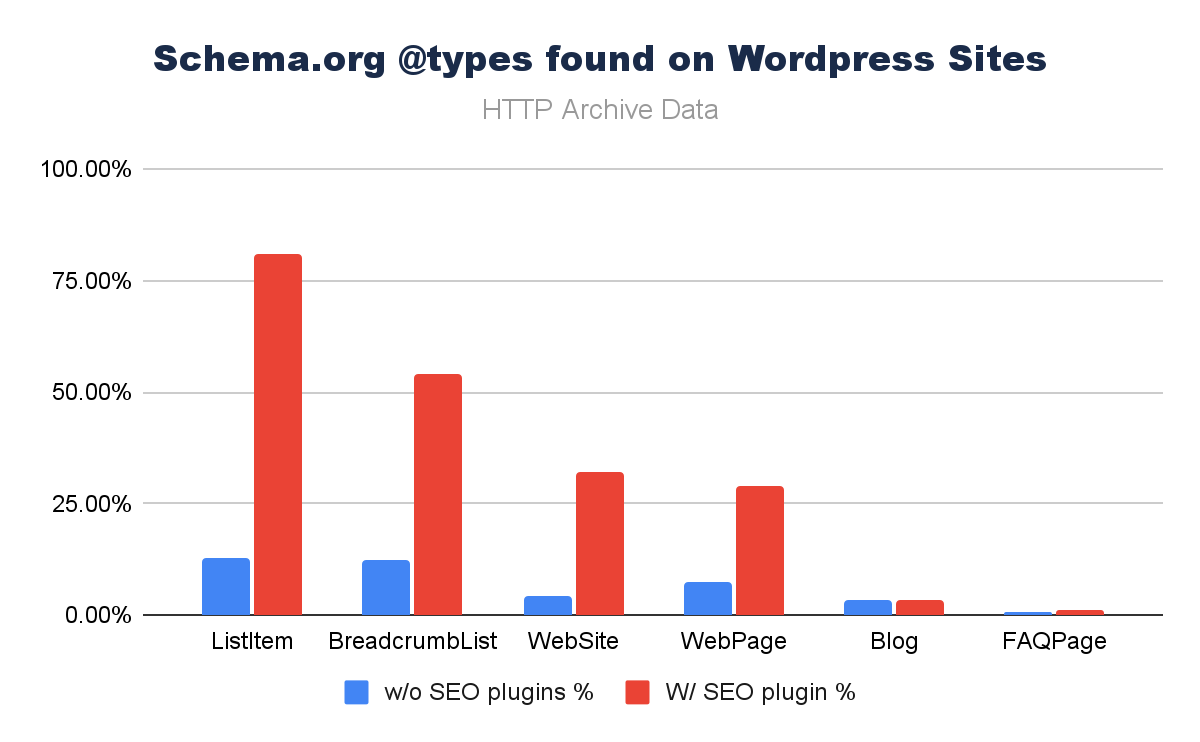

We can see (above) that structured data implementation and CMS adoption do correlate; what is more interesting here is to understand where the key drivers themselves are.

Viewing the most recent HTTP Archive data through a simple segment (SEO plugins vs. no SEO plugins) paints a stark picture.

When we limit the Schema.org @types to those most associated with SEO, it is really clear that some structured data types are pushed really hard by SEO plugins. These types are not completely absent from sites without them; people may be using lesser-known plugins or coding their own solutions, but ease of implementation is implicit in the data.
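For readers who want to see what “counting Schema.org @types” involves at page level, here is a minimal standard-library Python sketch. It only reads JSON-LD `<script>` blocks (not Microdata or RDFa), and the class and function names are hypothetical; the HTTP Archive pipeline has its own detection, which this does not reproduce.

```python
import json
from collections import Counter
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").strip().lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def _walk_types(node, found):
    """Recursively gather @type values, including those nested under @graph."""
    if isinstance(node, dict):
        value = node.get("@type")
        if isinstance(value, str):
            found.append(value)
        elif isinstance(value, list):
            found.extend(v for v in value if isinstance(v, str))
        for child in node.values():
            _walk_types(child, found)
    elif isinstance(node, list):
        for item in node:
            _walk_types(item, found)


def schema_types(html: str) -> Counter:
    """Count Schema.org @type values found in a page's JSON-LD blocks."""
    collector = JsonLdCollector()
    collector.feed(html)
    found = []
    for block in collector.blocks:
        try:
            _walk_types(json.loads(block), found)
        except json.JSONDecodeError:
            continue  # skip malformed JSON-LD rather than failing the whole page
    return Counter(found)
```

Running `schema_types` over pages grouped by “SEO plugin detected” vs. “not detected” and comparing the counts per type is one way to reproduce the kind of segment described above.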

Robots Meta Support

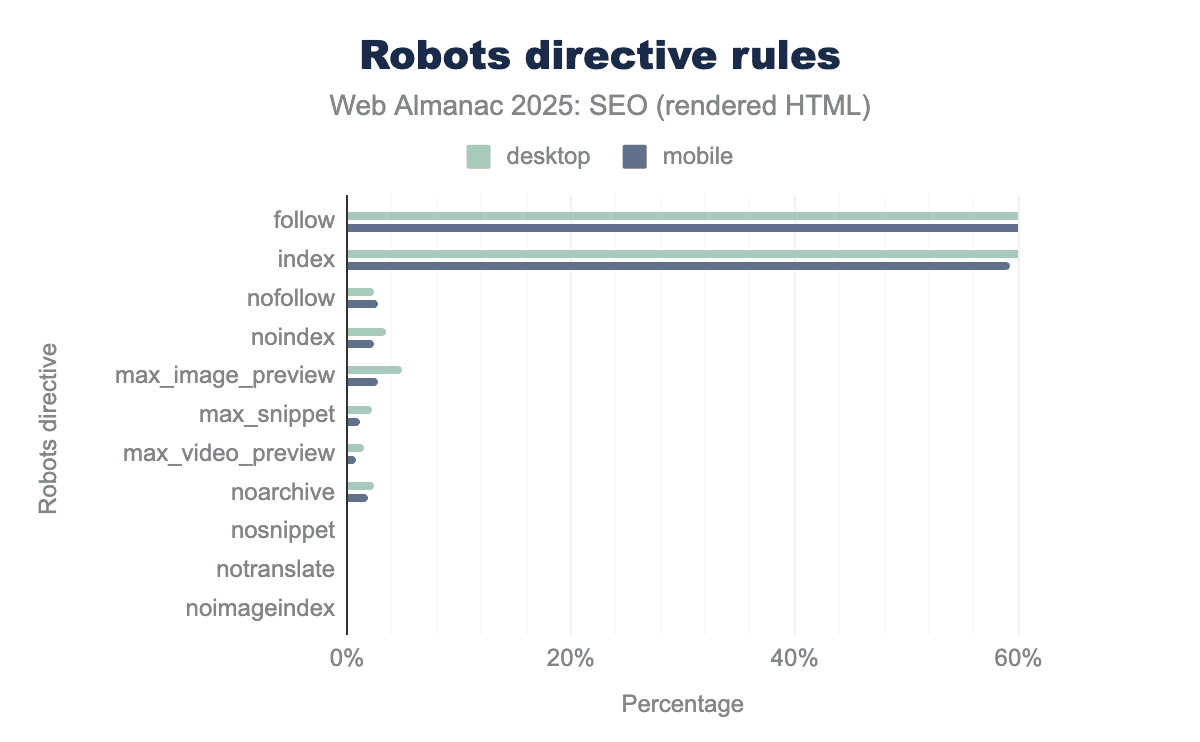

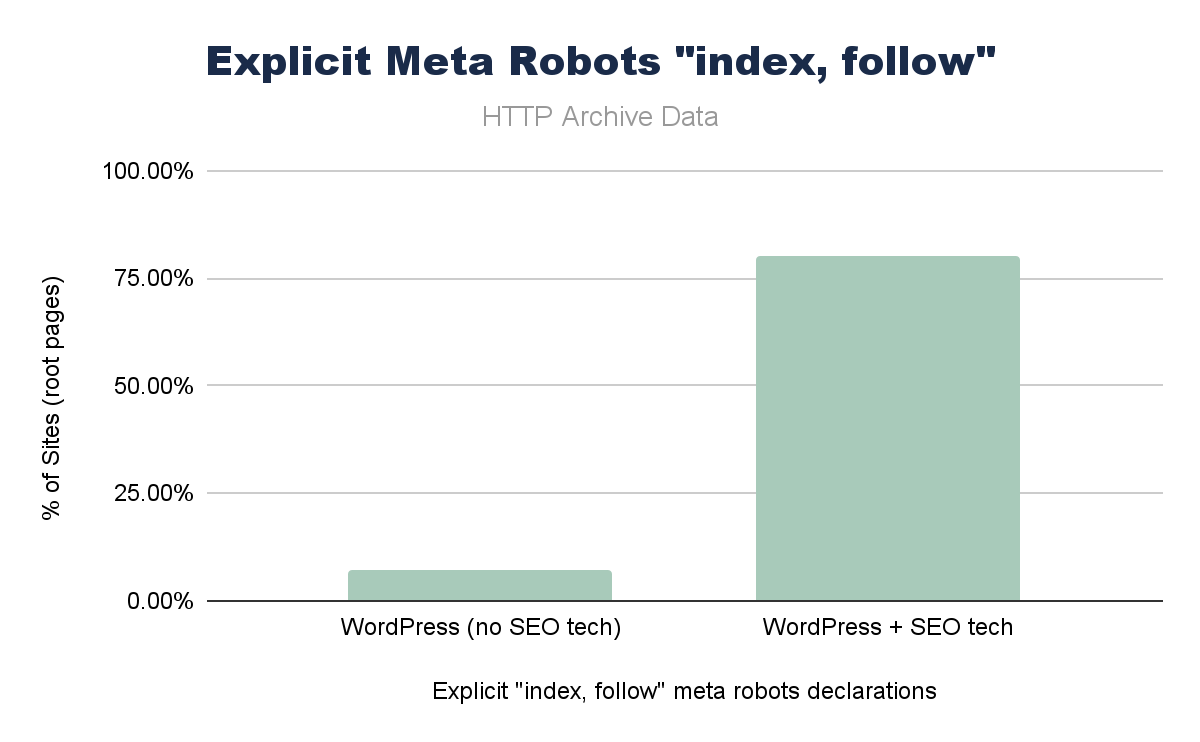

Another finding from the 2025 Web Almanac SEO chapter was that “follow” and “index” directives were the most prevalent, even though they’re technically redundant, as having no meta robots directives is implicitly the same thing.

Within the chapter number crunching itself, I didn’t dig in much deeper, but knowing that all major WordPress SEO plugins have “index,follow” as default, I was eager to see if I could make a stronger connection in the data.

Where SEO plugins were present on WordPress, “index, follow” was set on over 75% of root pages vs. <5% of WordPress sites without SEO plugins.

Given the ubiquity of WordPress and SEO plugins, this is likely a huge contributor to this particular configuration. While this is redundant, it isn’t wrong, but it is – again – a key example of how, when one or more of the main plugins establish a de facto standard like this, it really shapes a significant portion of the web.
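Detecting this redundant directive on a page is straightforward. The sketch below, using only Python’s standard library, pulls the directives out of a `<meta name="robots">` tag and flags pages that state both `index` and `follow` explicitly. The function names are hypothetical and the sample markup is made up for illustration; it is not output copied from any particular plugin.

```python
from html.parser import HTMLParser


class MetaRobotsParser(HTMLParser):
    """Collects the directives from every <meta name="robots"> tag on a page."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").strip().lower() != "robots":
            return
        # Directives are comma-separated, e.g. "index, follow, max-snippet:-1".
        for token in attr.get("content", "").split(","):
            token = token.strip().lower()
            if token:
                self.directives.add(token)


def meta_robots_directives(html: str) -> set:
    parser = MetaRobotsParser()
    parser.feed(html)
    return parser.directives


def has_explicit_index_follow(html: str) -> bool:
    """True when a page spells out both 'index' and 'follow' (the implicit default)."""
    return {"index", "follow"} <= meta_robots_directives(html)


# ASSUMPTION: hypothetical markup, for illustration only.
page = '<head><meta name="robots" content="index, follow, max-image-preview:large" /></head>'
print(has_explicit_index_follow(page))
```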

Diving Into LLMs.txt

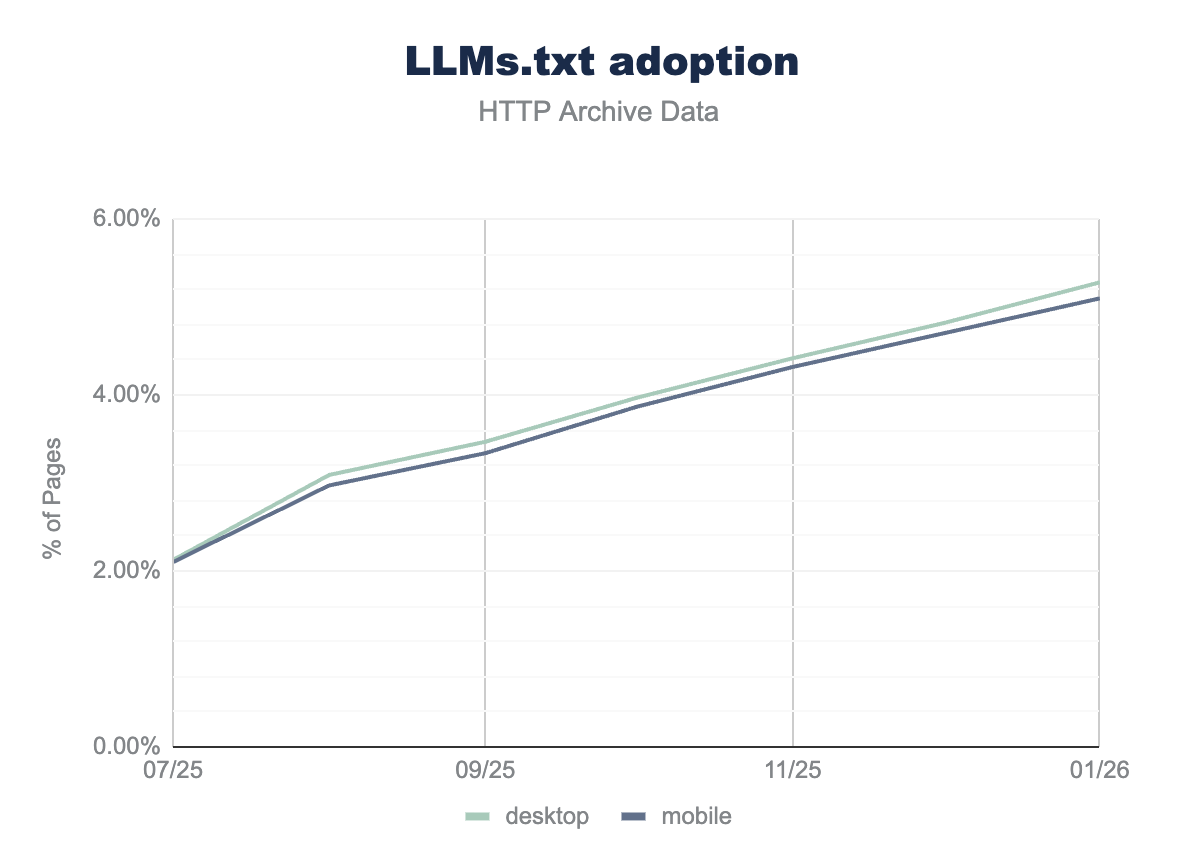

Another key area of change from the 2025 Web Almanac was the introduction of the llms.txt file. This was not an explicit endorsement of the file, but rather a tacit acknowledgment that this is an important data point in the AI Search age.

From the 2025 data, just over 2% of sites had a valid llms.txt file and:

- 39.6% of llms.txt files are related to All-in-One SEO.

- 3.6% of llms.txt files are related to Yoast SEO.

This is not necessarily an intentional act by all those involved, especially as All-in-One SEO enables this by default (rather than as an opt-in, as with Yoast SEO and Rank Math).
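What counts as a “valid” llms.txt in the data is not spelled out here, so the check below is an assumption rather than the Almanac’s rule. It follows the llms.txt proposal’s one required element, a top-level Markdown heading, and rejects responses that are not a 200 or that are served as HTML. The function name and parameters are hypothetical.

```python
def looks_like_llms_txt(status: int, content_type: str, body: str) -> bool:
    """Rough validity check for a response fetched from /llms.txt.

    ASSUMPTION: 'valid' here means a 200 response, not served as HTML,
    whose first non-empty line is a Markdown H1 ("# Title").
    """
    if status != 200:
        return False
    if "html" in content_type.lower():
        return False  # likely a soft 404 or a redirect to a normal page
    for line in body.splitlines():
        if line.strip():
            return line.lstrip().startswith("# ")
    return False  # empty body


# ASSUMPTION: hypothetical responses, for illustration only.
print(looks_like_llms_txt(200, "text/plain", "# Example Site\n\n> Short summary.\n"))
print(looks_like_llms_txt(200, "text/html", "<html><body>Not found</body></html>"))
```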

The first data was gathered on July 25, 2025, and if we take a month-by-month view of the data since then, we can see further growth. It is hard not to see this as growing confidence in this file OR, at least, that it’s so easy to enable that more people are likely hedging their bets.

Conclusion

The Web Almanac data suggests that SEO, at a macro level, moves less because of individual SEOs and more because WordPress, Shopify, Wix, or a major plugin ships a default.

- Canonical tags correlate with CMS growth.

- Robots.txt validity improves with CMS governance.

- Redundant “index,follow” directives proliferate because plugins make them explicit.

- Even llms.txt is already spreading through plugin toggles before it even gets full consensus.

This doesn’t diminish the impact of SEO; it reframes it. Individual practitioners still create competitive advantage, especially in advanced configuration, architecture, content quality, and business logic. But the baseline state of the web, the technical floor on which everything else is built, is increasingly set by product teams shipping defaults to millions of sites.

Perhaps we should consider that if CMSs are the infrastructure layer of modern SEO, then plugin creators are de facto standards setters. They deploy “best practice” before it becomes doctrine.

This is how it should work, but I’m also not entirely comfortable with this. They normalize implementation and even create new conventions simply by making them zero-cost. Standards that are redundant have the potential to endure because they can.

So the question is less about whether CMS platforms impact SEO. They clearly do. The more interesting question is whether we, as SEOs, are paying enough attention to where these defaults originate, how they evolve, and how much of the web’s “best practice” is really just the path of least resistance shipped at scale.

An SEO’s value should not be interpreted through the number of hours they spend discussing canonical tags, meta robots, and rules of sitemap inclusion. This should be standard and default. If you want to have an outsized impact on SEO, lobby an existing tool, create your own plugin, or drive interest to influence change in one.


Featured Image: Prostock-studio/Shutterstock
