Enterprise AI deployment has been facing a fundamental tension: organisations want sophisticated language models but baulk at the infrastructure costs and energy consumption of frontier systems.

NTT Inc.’s recent launch of tsuzumi 2, a lightweight large language model (LLM) running on a single GPU, demonstrates how businesses are resolving this constraint, with early deployments showing performance matching larger models at a fraction of the operational cost.

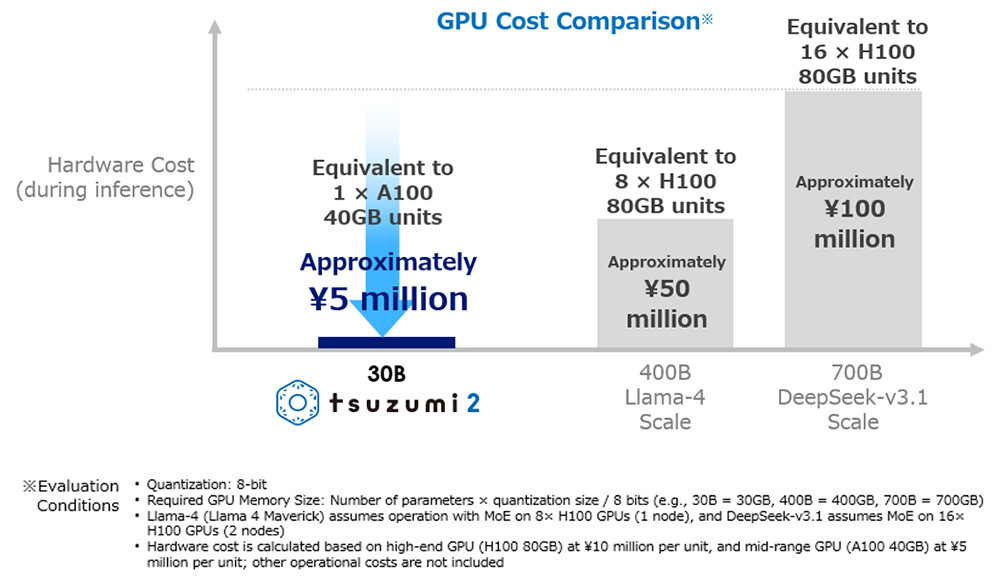

The business case is straightforward. Traditional large language models require dozens or hundreds of GPUs, creating electricity consumption and operational cost barriers that make AI deployment impractical for many organisations.

For enterprises operating in markets with constrained power infrastructure or tight operational budgets, these requirements eliminate AI as a viable option. The company’s press release illustrates the practical considerations driving lightweight LLM adoption with Tokyo Online University’s deployment.

The university operates an on-premise platform that keeps student and staff data within its campus network, a data sovereignty requirement common across educational institutions and regulated industries.

After validating that tsuzumi 2 handles complex context understanding and long-document processing at production-ready levels, the university deployed it for course Q&A enhancement, teaching material creation support, and personalised student guidance.

The single-GPU operation means the university avoids both capital expenditure for GPU clusters and ongoing electricity costs. More significantly, on-premise deployment addresses data privacy concerns that prevent many educational institutions from using cloud-based AI services that process sensitive student information.

Performance without scale: The technical economics

NTT’s internal evaluation for financial-system inquiry handling showed tsuzumi 2 matching or exceeding leading external models despite dramatically smaller infrastructure requirements. This performance-to-resource ratio determines AI adoption feasibility for enterprises where the total cost of ownership drives decisions.

The model delivers what NTT characterises as “world-top results among models of comparable size” in Japanese language performance, with particular strength in business domains prioritising knowledge, analysis, instruction-following, and safety.

For enterprises operating primarily in Japanese markets, this language optimisation reduces the need to deploy larger multilingual models requiring significantly more computational resources.

Reinforced knowledge in the financial, medical, and public sectors, developed in response to customer demand, enables domain-specific deployments without extensive fine-tuning.

The model’s RAG (Retrieval-Augmented Generation) and fine-tuning capabilities allow efficient development of specialised applications for enterprises with proprietary knowledge bases or industry-specific terminology where generic models underperform.

Data sovereignty and security as business drivers

Beyond cost considerations, data sovereignty drives lightweight LLM adoption across regulated industries. Organisations handling confidential information face risk exposure when processing data through external AI services subject to foreign jurisdiction.

In fact, NTT positions tsuzumi 2 as a “purely domestic model” developed from scratch in Japan, operating on-premises or in private clouds. This addresses concerns prevalent across Asia-Pacific markets about data residency, regulatory compliance, and information security.

FUJIFILM Business Innovation’s partnership with NTT DOCOMO BUSINESS demonstrates how enterprises combine lightweight models with existing data infrastructure. FUJIFILM’s REiLI technology converts unstructured corporate data (contracts, proposals, mixed text and images) into structured information.

Integrating tsuzumi 2’s generative capabilities enables advanced document analysis without transmitting sensitive corporate information to external AI providers. This architectural approach, combining lightweight models with on-premise data processing, represents a practical enterprise AI strategy that balances capability requirements with security, compliance, and cost constraints.

Multimodal capabilities and business workflows

tsuzumi 2 includes built-in multimodal support handling text, images, and voice within enterprise applications. This matters for business workflows requiring AI to process multiple data types without deploying separate specialised models.

Manufacturing quality control, customer service operations, and document processing workflows typically involve text, images, and sometimes voice inputs. A single model handling all three reduces integration complexity compared to managing multiple specialised systems with different operational requirements.

Market context and implementation considerations

NTT’s lightweight approach contrasts with hyperscaler strategies emphasising massive models with broad capabilities. For enterprises with substantial AI budgets and advanced technical teams, frontier models from OpenAI, Anthropic, and Google provide cutting-edge performance.

However, this approach excludes organisations lacking these resources: a significant portion of the enterprise market, particularly across Asia-Pacific regions with varying infrastructure quality. Regional considerations matter.

Power reliability, internet connectivity, data centre availability, and regulatory frameworks vary significantly across markets. Lightweight models enabling on-premise deployment accommodate these variations better than approaches requiring consistent cloud infrastructure access.

Organisations evaluating lightweight LLM deployment should consider several factors:

Domain specialisation: tsuzumi 2’s reinforced knowledge in the financial, medical, and public sectors addresses specific domains, but organisations in other industries should evaluate whether the available domain knowledge meets their requirements.

Language considerations: Optimisation for Japanese language processing benefits Japanese-market operations but may not suit multilingual enterprises requiring consistent cross-language performance.

Integration complexity: On-premise deployment requires internal technical capabilities for installation, maintenance, and updates. Organisations lacking these capabilities may find cloud-based alternatives operationally simpler despite higher costs.

Performance tradeoffs: While tsuzumi 2 matches larger models in specific domains, frontier models may outperform it in edge cases or novel applications. Organisations should evaluate whether domain-specific performance suffices or whether broader capabilities justify higher infrastructure costs.

The practical path forward?

NTT’s tsuzumi 2 deployment demonstrates that sophisticated AI implementation doesn’t require hyperscale infrastructure, at least for organisations whose requirements align with lightweight model capabilities. Early enterprise adoptions show practical business value: reduced operational costs, improved data sovereignty, and production-ready performance for specific domains.

As enterprises navigate AI adoption, the tension between capability requirements and operational constraints increasingly drives demand for efficient, specialised solutions rather than general-purpose systems requiring extensive infrastructure.

For organisations evaluating AI deployment strategies, the question isn’t whether lightweight models are “better” than frontier systems. It’s whether they’re sufficient for specific business requirements while addressing the cost, security, and operational constraints that make alternative approaches impractical.

The answer, as the Tokyo Online University and FUJIFILM Business Innovation deployments demonstrate, is increasingly yes.

AI News is powered by TechForge Media.

Disclaimer: This article is sourced from external platforms. OverBeta has not independently verified the information. Readers are advised to verify details before relying on them.