Information retrieval systems are designed to satisfy a user: to make them happy with the quality of what is recalled. It’s important we understand that. Every system, along with its inputs and outputs, is designed to provide the best user experience.

From the training data to similarity scoring and the machine’s ability to “understand” our tired, sad bullshit, this is the third in a series I’ve titled information retrieval for morons.

TL;DR

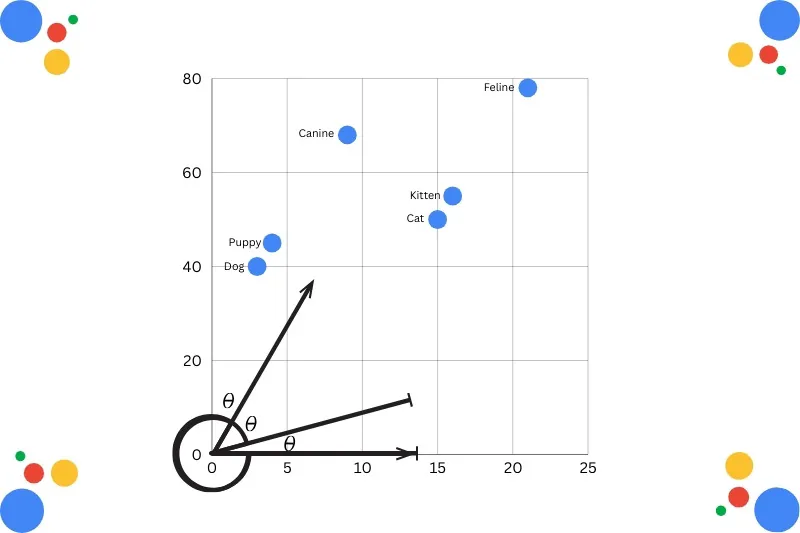

- In the vector space model, the distance between vectors represents the relevance (similarity) between documents or items.

- Vectorization has allowed search engines to perform concept searching instead of word searching. It is the alignment of concepts, not letters or words.

- Longer documents contain more similar terms. To combat this, document length is normalized, and relevance is prioritized.

- Google has been doing this for over a decade. Maybe, for over a decade, you have too.

Things You Should Know Before We Start

Here are some concepts and systems you should be aware of before we dive in.

I don’t remember all of these, and neither will you. Just try to enjoy yourself and hope that, through osmosis and consistency, you vaguely remember things over time.

- TF-IDF stands for term frequency-inverse document frequency. It is a numerical statistic used in NLP and information retrieval to measure how relevant a term is to a document within a corpus.

- Cosine similarity measures the cosine of the angle between two vectors, ranging from -1 to 1. A smaller angle (closer to 1) implies higher similarity.

- The bag-of-words model is a way of representing text data when modelling text with machine learning algorithms: each text becomes a count of its words, ignoring grammar and word order.

- Feature extraction/encoding models are used to convert raw text into numerical representations that can be processed by machine learning models.

- Euclidean distance measures the straight-line distance between two points in vector space to calculate data similarity (or dissimilarity).

- Doc2Vec (an extension of Word2Vec) is designed to represent the similarity (or lack of it) between documents rather than words.
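
If you want to see a few of these in action, here is a minimal Python sketch that uses only the standard library. It turns three made-up documents into bag-of-words counts and weights them with one common TF-IDF variant (raw count × log(N / document frequency)). It then compares them with cosine similarity and Euclidean distance. The documents and the exact weighting formula are illustrative assumptions. Real systems use many TF-IDF variants, usually with smoothing.

```python
import math
from collections import Counter

# Hypothetical toy corpus, purely for illustration
docs = [
    "vector space model for information retrieval",
    "boolean retrieval model uses and or not",
    "cricket bat hits the ball to the boundary",
]


def bag_of_words(text: str) -> Counter:
    """Bag-of-words: count each lowercase token, ignoring word order."""
    return Counter(text.lower().split())


def tf_idf(corpus: list[str]) -> tuple[list[str], list[list[float]]]:
    """Return the vocabulary and one TF-IDF vector per document.

    Weight = raw term count * log(N / df), one of several common variants.
    """
    bags = [bag_of_words(d) for d in corpus]
    vocab = sorted(set().union(*bags))
    n_docs = len(corpus)
    # Document frequency: how many documents contain each term
    df = {t: sum(1 for b in bags if t in b) for t in vocab}
    vectors = [[b[t] * math.log(n_docs / df[t]) for t in vocab] for b in bags]
    return vocab, vectors


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between a and b: 1 = same direction, 0 = orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def euclidean_distance(a: list[float], b: list[float]) -> float:
    """Straight-line distance between two points; smaller means more similar."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


vocab, vecs = tf_idf(docs)
print(cosine_similarity(vecs[0], vecs[1]))  # shares "retrieval" and "model"
print(cosine_similarity(vecs[0], vecs[2]))  # no shared terms -> 0.0
print(euclidean_distance(vecs[0], vecs[2]))
```

A term that appears in every document gets an IDF of log(1) = 0. That is the “inverse document frequency” part doing its job, because ubiquitous words carry no signal.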

What Is The Vector Space Model?

The vector space model (VSM) is an algebraic model that represents text documents or items as “vectors.” This representation allows systems to measure the distance between each vector.

That distance is used to calculate the similarity between terms or items.

Commonly used in information retrieval, document ranking, and keyword extraction, vector models create structure. This structured, high-dimensional numerical space enables the calculation of relevance via similarity measures like cosine similarity.

Terms are assigned values. If a term appears in the document, its value is non-zero. It’s worth noting that terms are not just individual keywords. They can be phrases, sentences, and entire documents.

Once queries, phrases, and sentences are assigned values, the document can be scored. It has a physical place in the vector space, as chosen by the model.

Using these scores, documents can be compared with one another against the input query. You generate similarity scores at scale. This is known as semantic similarity, where a set of documents is scored and positioned in the index based on their meaning.

Not simply their lexical similarity.
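
To make the scoring idea concrete, here is a minimal ranking sketch. The query and each document are mapped onto a shared vocabulary, so a term’s value is non-zero only if it appears. Documents are then sorted by cosine similarity to the query. The mini-index and function names are hypothetical. This version uses raw word counts, so it only captures lexical overlap. The semantic similarity described above would replace these sparse count vectors with dense embeddings from a trained model, which this sketch does not include.

```python
import math
from collections import Counter


def to_vector(text: str, vocab: list[str]) -> list[float]:
    """Map text onto the shared vocabulary; absent terms get 0."""
    counts = Counter(text.lower().split())
    return [float(counts[t]) for t in vocab]


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def rank(query: str, docs: dict[str, str]) -> list[tuple[str, float]]:
    """Score every document against the query and sort best-first."""
    vocab = sorted({w for text in [query, *docs.values()] for w in text.lower().split()})
    q = to_vector(query, vocab)
    scored = [(doc_id, cosine(q, to_vector(text, vocab))) for doc_id, text in docs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical mini-index
index = {
    "guide": "how the vector space model ranks documents",
    "recipe": "how to bake sourdough bread at home",
    "faq": "vector databases store document vectors",
}
for doc_id, score in rank("vector space model", index):
    print(f"{doc_id}: {score:.3f}")
```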

I know this sounds a bit complicated, but think of it like this:

Words on a page can be manipulated. Keyword stuffed. They’re too simple. But if you can calculate meaning (of the document), you’re one step closer to a quality output.

Why Does It Work So Well?

Machines don’t just like structure. They bloody love it.

Fixed-length (or styled) inputs and outputs create predictable, accurate results. The more informative and compact a dataset, the better the classification, extraction, and prediction you’ll get.

The problem with text is that it doesn’t have much structure. At least not in the eyes of a machine. It’s messy. This is why the vector space model has such an advantage over the classic Boolean Retrieval Model.

In Boolean Retrieval Models, documents are retrieved based on whether they satisfy the conditions of a query that uses Boolean logic. The model treats each document as a set of words or terms. It uses AND, OR, and NOT operators to return all results that fit the bill.

Its simplicity has its uses, but it can’t interpret meaning.
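
For contrast, here is a minimal sketch of Boolean retrieval that uses Python sets as a toy inverted index. The collection and the `postings` helper are hypothetical. A document either matches the AND/OR/NOT conditions or it doesn’t. There is no score, so nothing tells you which match is most relevant.

```python
# Hypothetical mini-collection
docs = {
    1: "cheap flights to paris",
    2: "paris hotel deals",
    3: "cheap hotel near the airport",
}

# Inverted index: term -> set of IDs of documents containing that term
index: dict[str, set[int]] = {}
for doc_id, text in docs.items():
    for term in text.lower().split():
        index.setdefault(term, set()).add(doc_id)

ALL_IDS = set(docs)


def postings(term: str) -> set[int]:
    """Documents containing the term (empty set if it never appears)."""
    return index.get(term.lower(), set())


# cheap AND hotel -> {3}
print(postings("cheap") & postings("hotel"))
# paris OR airport -> {1, 2, 3}
print(postings("paris") | postings("airport"))
# hotel AND NOT paris -> {3}
print(postings("hotel") & (ALL_IDS - postings("paris")))
```

Every document in a result set is equally “relevant.” A page that mentions “hotel” once comes back exactly the same as a page that is entirely about hotels.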

Think of it more like data retrieval than identifying and interpreting information. We fall into the term frequency (TF) trap too often with more nuanced searches. Easy, but lazy in today’s world.

The vector space model, by contrast, interprets actual relevance to the query and doesn’t require exact-match terms. That’s the beauty of it.

It’s this structure that creates much more precise recall.

The Transformer Revolution (Not Michael Bay)

Unlike Michael Bay’s series, the real transformer architecture replaced older, static embedding methods (like Word2Vec) with contextual embeddings.

Whereas static models assign one vector to each word, transformers generate dynamic representations that change based on the surrounding words in a sentence.

And yes, Google has been doing this for some time. It’s not new. It’s not GEO. It’s just modern information retrieval that “understands” a page.

I mean, obviously not. But you, as a hopefully sentient, breathing being, understand what I mean. Transformers, well, they fake it:

- Transformers weight input data by importance.

- The model pays more attention to words that demand or provide additional context.

Let me give you an example.

“The bat’s teeth flashed as it flew out of the cave.”

Bat is an ambiguous term. Ambiguity is bad in the age of AI.

But transformer architecture links bat with “teeth,” “flew,” and “cave.” That signals bat is far more likely to be a bloodsucking rodent* than something a gentleman would use to caress the ball for a boundary in the world’s finest sport.

*No idea if a bat is a rodent, but it looks like a rat with wings.
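
Here is a deliberately tiny sketch of the idea behind self-attention, using the bat example. The two-dimensional “animal” and “cricket” embeddings are invented values. Real transformers add learned query/key/value projections, multiple heads and many layers, and all of these are omitted here. What the sketch does show: a static lookup gives “bat” the same vector in both sentences. Attention blends in the surrounding words instead, so the contextual vector leans towards whichever sense the context supports.

```python
import math

# Hypothetical 2-D embeddings: dimension 0 ~ "animal" sense, dimension 1 ~ "cricket" sense.
# Real models learn hundreds of dimensions; these numbers are invented.
EMBED = {
    "the": [0.1, 0.1],
    "bat": [1.0, 1.0],  # ambiguous: equal weight on both senses
    "teeth": [2.0, 0.0],
    "flew": [2.0, 0.0],
    "cave": [2.0, 0.0],
    "hit": [0.0, 2.0],
    "ball": [0.0, 2.0],
    "boundary": [0.0, 2.0],
}


def softmax(scores: list[float]) -> list[float]:
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: list[str]) -> list[list[float]]:
    """Single-head scaled dot-product self-attention with Q = K = V = embeddings."""
    vecs = [EMBED[t] for t in tokens]
    d = len(vecs[0])
    outputs = []
    for q in vecs:
        # How strongly this token "attends" to every token in the sentence
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in vecs]
        weights = softmax(scores)
        # New representation: attention-weighted average of all token vectors
        outputs.append([sum(w * v[i] for w, v in zip(weights, vecs)) for i in range(d)])
    return outputs


cave = ["the", "bat", "teeth", "flew", "cave"]
cricket = ["the", "bat", "hit", "ball", "boundary"]

print("static bat:", EMBED["bat"])  # same lookup in both sentences
print("bat (cave):", self_attention(cave)[1])
print("bat (cricket):", self_attention(cricket)[1])
```

In the cave sentence, the first (animal) coordinate of bat’s output ends up larger. In the cricket sentence, the second one does.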

BERT Strikes Back

BERT. Bidirectional Encoder Representations from Transformers. Shrugs.

This is how Google has worked for years: it applies this type of contextually aware understanding to the semantic relationships between words and documents. It’s a big part of the reason why Google is so good at mapping and understanding intent and how it shifts over time.

Later BERT-style models such as DeBERTa allow words to be represented by two vectors: one for their content (meaning) and one for their position in the document. This is called disentangled attention. It provides more accurate context.

Yep, sounds weird to me, too.
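
For the curious, the DeBERTa paper writes the attention score between tokens i and j as the sum of three terms. In the notation below, Q^c, K^c and V^c are projections of the content vectors. Q^r and K^r are projections of relative-position embeddings. δ(i,j) is the (clipped) relative distance from i to j, and d is the hidden size. The √(3d) scaling accounts for summing three terms. Treat this as a summary and check the paper for the precise definitions.

```latex
\tilde{A}_{i,j} =
  \underbrace{Q^{c}_{i} {K^{c}_{j}}^{\top}}_{\text{content-to-content}}
+ \underbrace{Q^{c}_{i} {K^{r}_{\delta(i,j)}}^{\top}}_{\text{content-to-position}}
+ \underbrace{K^{c}_{j} {Q^{r}_{\delta(j,i)}}^{\top}}_{\text{position-to-content}}

H_{o} = \operatorname{softmax}\!\left(\frac{\tilde{A}}{\sqrt{3d}}\right) V^{c}
```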

BERT processes the whole sequence of words at once, reading in both directions. This means context is drawn from the entire input, up to the model’s length limit, not just the few surrounding words.

Synonyms Baby

Launched in 2015, RankBrain was Google’s first deep learning system. Well, that I know of anyway. It was designed to help the search algorithm understand how words relate to concepts.

This was kind of the peak search era. Anyone could start a website about anything. Get it up and ranking. Make a load of money. Not need any kind of rigor.

Halcyon days.

With hindsight, these days weren’t great for the wider public. Getting advice on funeral planning and commercial waste management from a spotty 23-year-old’s bedroom in Halifax.

As new and evolving queries surged, RankBrain and the subsequent neural matching were vital.

Then there was MUM: Google’s ability to “understand” text, images, and visual content across multiple languages simultaneously.

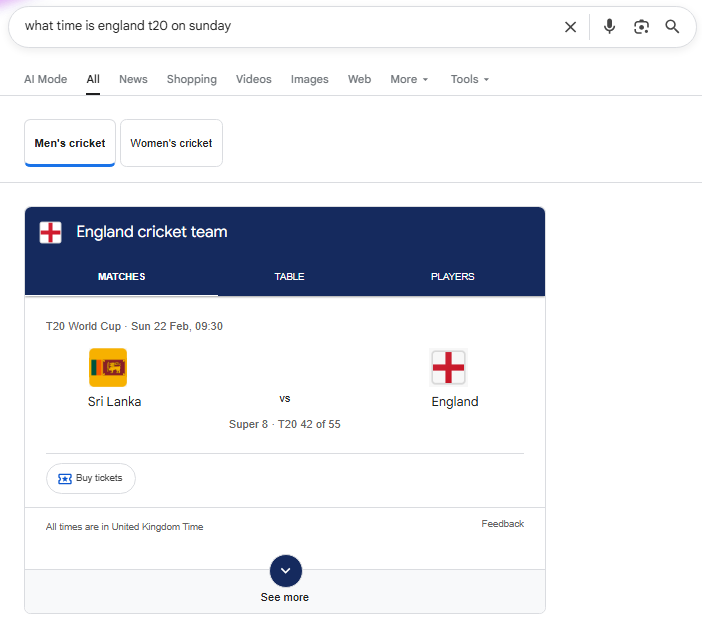

Document length was an obvious problem 10 years ago. Maybe less. Longer articles, for better or worse, always did better. I remember writing 10,000-word articles on some nonsense about website builders and sticking them on a homepage.

Even then, that was a rubbish idea…

In a world where queries and documents are mapped to numbers, you could be forgiven for thinking that longer documents will always be surfaced over shorter ones.

Remember 10-15 years ago when everyone was obsessed with every article being 2,000 words?

“That’s the optimal length for SEO.”

If you see another “What time is X” 2,000-word article, you have my permission to shoot me.

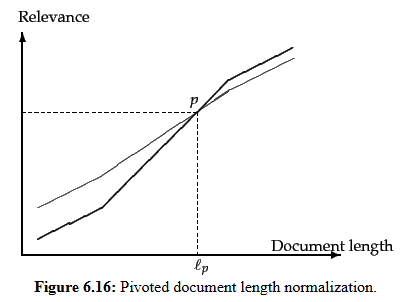

Longer documents will have higher TF values simply because they contain more words. They also contain more distinct terms. These factors can conspire to raise the scores of longer documents.

Hence why, for a while, they were the zenith of our crappy content production.

Longer documents can broadly be lumped into two categories:

- Verbose documents that essentially repeat the same content (hello, keyword stuffing, my old friend).

- Documents covering multiple topics, in which the search terms probably match small segments of the document, but not all of it.

To combat this obvious issue, a form of compensation for document length is used, known as Pivoted Document Length Normalization. This adjusts scores to counteract the natural bias longer documents have.
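
Here is a minimal sketch of the idea, following the pivoted normalization formula from Singhal, Buckley and Mitra (1996). The normalization factor is (1 − slope) × pivot + slope × old normalization. In this sketch the “old normalization” is simply document length in terms, and the pivot is the collection’s average length. The slope of 0.2 and the document numbers are illustrative assumptions, not tuned values.

```python
def pivoted_norm(doc_len: float, pivot: float, slope: float = 0.2) -> float:
    """Pivoted normalization factor: (1 - slope) * pivot + slope * doc_len.

    Grows with document length, but more gently than length itself, and
    equals the pivot for a document of exactly average length.
    """
    return (1.0 - slope) * pivot + slope * doc_len


def normalized_score(raw_tf: float, doc_len: float, pivot: float, slope: float = 0.2) -> float:
    """Raw term-frequency score divided by the pivoted normalization factor."""
    return raw_tf / pivoted_norm(doc_len, pivot, slope)


# Hypothetical collection with an average document length of 500 terms
AVG_LEN = 500.0

# A long page mentions the query term 4x as often, partly just by being 8x longer
short_score = normalized_score(raw_tf=5, doc_len=250, pivot=AVG_LEN)
long_score = normalized_score(raw_tf=20, doc_len=2000, pivot=AVG_LEN)

print(f"raw ratio: {20 / 5:.2f}, normalized ratio: {long_score / short_score:.2f}")
```

Here the long page’s 4× raw advantage shrinks to 2.25×. The slope controls how hard long documents are reined in: slope = 1 divides by raw length, while slope = 0 ignores length altogether.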

Cosine similarity should be used because we don’t want to favour longer (or shorter) documents; we want to focus on relevance. Leveraging this normalization prioritizes relevance over term frequency.

It’s why cosine similarity is so valuable. It is robust to document length. A short and a long answer can be seen as topically identical if they point in the same direction in the vector space.
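
A quick way to see that robustness is to repeat a short answer five times, which is the “verbose document” case above. The raw dot product grows fivefold, but the cosine similarity does not move, because cosine divides by both vectors’ lengths. The four-term vocabulary and the counts are made up for illustration.

```python
import math


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def cosine(a: list[float], b: list[float]) -> float:
    """Dot product divided by both lengths, so only direction matters."""
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


# Term counts over a hypothetical vocabulary: ["vector", "space", "model", "seo"]
query = [1, 1, 1, 0]
short_answer = [2, 1, 1, 0]
long_answer = [5 * x for x in short_answer]  # same content repeated five times
off_topic = [0, 0, 1, 9]

print(dot(query, short_answer), dot(query, long_answer))  # 4 20
print(cosine(query, short_answer), cosine(query, long_answer))  # same value, up to float rounding
print(cosine(query, off_topic))  # much lower: points in a different direction
```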

Great question.

Well, no one’s expecting you to understand the intricacies of a vector database. You don’t really need to know that databases create specialised indices to find close neighbours without checking every single record.

That’s just how companies like Google strike the right balance between performance, cost, and operational simplicity.
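
You really don’t need this. But if you’re curious what “find close neighbours without checking every record” can look like, here is a toy approximate nearest-neighbour index using random-hyperplane locality-sensitive hashing. It is one technique among several; graph-based indices such as HNSW are common in production vector databases. The class name, plane count and random data are illustrative assumptions. The trade-off is the point. A query is only compared with its own bucket, which is fast. But a true neighbour that hashed into a different bucket will be missed.

```python
import math
import random
from collections import defaultdict


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


class RandomHyperplaneLSH:
    """Toy approximate nearest-neighbour index (random-hyperplane LSH).

    Each vector gets a bit signature: one bit per random hyperplane, recording
    which side of it the vector falls on. Vectors pointing in similar
    directions tend to share signatures, so they land in the same bucket.
    """

    def __init__(self, dim: int, n_planes: int = 8, seed: int = 42):
        rng = random.Random(seed)
        self.planes = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_planes)]
        self.buckets: dict[tuple[bool, ...], list[tuple[str, list[float]]]] = defaultdict(list)

    def _signature(self, vec: list[float]) -> tuple[bool, ...]:
        return tuple(sum(p * v for p, v in zip(plane, vec)) >= 0 for plane in self.planes)

    def add(self, item_id: str, vec: list[float]) -> None:
        self.buckets[self._signature(vec)].append((item_id, vec))

    def query(self, vec: list[float], k: int = 3) -> list[tuple[str, float]]:
        """Score only the query's own bucket, not the whole collection."""
        candidates = self.buckets.get(self._signature(vec), [])
        scored = [(item_id, cosine(vec, v)) for item_id, v in candidates]
        return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Hypothetical collection: 1,000 random 16-dimensional vectors
rng = random.Random(0)
vectors = {f"doc-{i}": [rng.gauss(0.0, 1.0) for _ in range(16)] for i in range(1000)}
index = RandomHyperplaneLSH(dim=16)
for doc_id, vec in vectors.items():
    index.add(doc_id, vec)

# A query identical to a stored vector always hashes to that vector's bucket
print(index.query(vectors["doc-7"], k=1))
```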

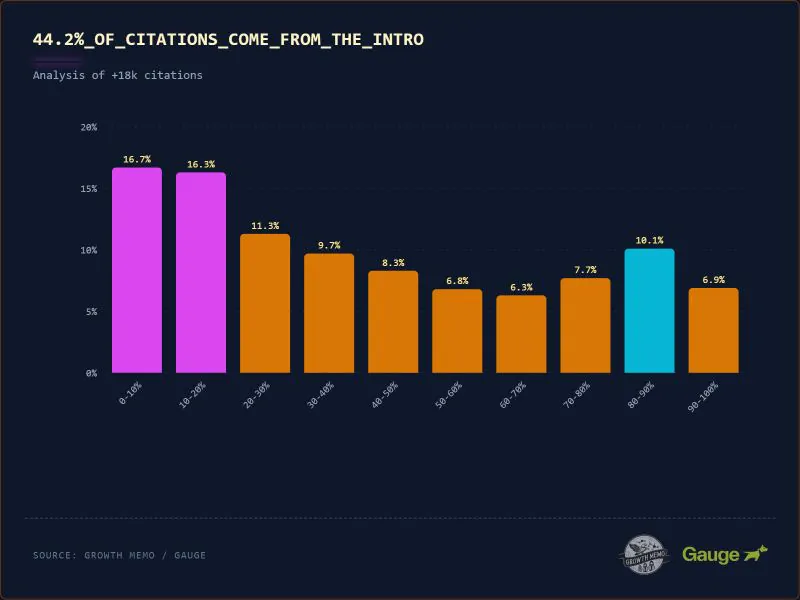

Kevin Indig’s excellent recent research shows that 44.2% of all citations in ChatGPT originate from the first 30% of the text. The probability of citation drops significantly after this initial section, creating a “ski ramp” effect.

Even more reason not to mindlessly create huge documents because someone told you to.

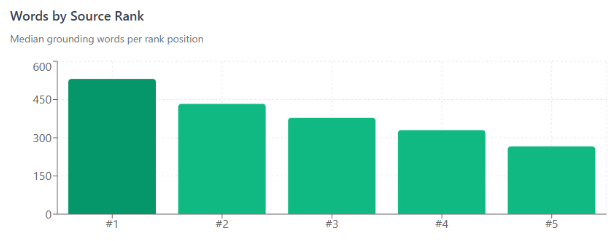

In “AI search,” a lot of this comes down to tokens. According to Dan Petrovic’s always excellent work, each query has a fixed grounding budget of approximately 2,000 words total, distributed across sources by relevance rank.

In Google, at least. And your rank determines your share. So get SEO-ing.
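
The exact split isn’t given here, only that it follows relevance rank. So treat this as a hypothetical illustration. It assumes each source’s share is proportional to 1/rank, which is enough to show why ranking first matters so much more than ranking fourth. The weighting, the function name and the source names are all assumptions.

```python
def allocate_budget(sources: list[str], budget: int = 2000) -> dict[str, int]:
    """Split a fixed word budget across sources ordered best-first.

    ASSUMPTION: share proportional to 1/rank. This weighting is hypothetical,
    chosen only to illustrate rank-based allocation.
    """
    weights = [1.0 / rank for rank in range(1, len(sources) + 1)]
    total = sum(weights)
    raw = [budget * w / total for w in weights]
    shares = [int(r) for r in raw]
    # Give words lost to rounding down to the largest fractional remainders
    leftover = budget - sum(shares)
    by_remainder = sorted(range(len(raw)), key=lambda i: raw[i] - shares[i], reverse=True)
    for i in by_remainder[:leftover]:
        shares[i] += 1
    return dict(zip(sources, shares))


# Hypothetical ranked sources, best first
print(allocate_budget(["source-a", "source-b", "source-c", "source-d"]))
```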

Metehan’s research on What 200,000 Tokens Reveal About AEO/GEO really highlights how important this is. Or will be. Not just for our jobs, but for biases and cultural implications.

Because text is tokenized (compressed and converted into a sequence of integer IDs), format and language have cost and accuracy implications.

- Plain English prose is the most token-efficient format at 5.9 characters per token. Let’s call it 100% relative efficiency. A baseline.

- Turkish prose manages just 3.6. That’s 61% as efficient.

- Markdown tables: 2.7. That’s 46% as efficient.
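
The arithmetic behind those percentages is just each format’s characters per token divided by the English baseline. Here it is as a small script, with a rough token estimate for a hypothetical 12,000-character page. The estimate assumes token count scales linearly with characters, which real tokenizers only approximate.

```python
# Characters-per-token figures quoted above, from Metehan's research
CHARS_PER_TOKEN = {
    "English prose": 5.9,
    "Turkish prose": 3.6,
    "Markdown tables": 2.7,
}


def relative_efficiency(fmt: str, baseline: str = "English prose") -> float:
    """Characters per token as a percentage of the baseline format's."""
    return 100 * CHARS_PER_TOKEN[fmt] / CHARS_PER_TOKEN[baseline]


def estimated_tokens(n_chars: int, fmt: str) -> int:
    """Rough token count, assuming tokens scale linearly with characters."""
    return round(n_chars / CHARS_PER_TOKEN[fmt])


for fmt in CHARS_PER_TOKEN:
    print(
        f"{fmt}: {relative_efficiency(fmt):.0f}% as efficient, "
        f"~{estimated_tokens(12_000, fmt)} tokens per 12,000 characters"
    )
```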

Languages are not created equal. In an era where capital expenditure (CapEx) costs are soaring, and AI companies have struck deals I’m not sure they can cash, this matters.

Well, as Google has been doing this for some time, the same things should work across both interfaces.

- Answer the flipping question. My god. Get to the point. I don’t care about anything other than what I want. Give it to me immediately (spoken as a human and a machine).

- So frontload your important information. I have no attention span. Neither do transformer models.

- Disambiguate. Do entity optimization work. Connect the dots online. Claim your knowledge panel. Authors, social accounts, structured data, building brands and profiles.

- Excellent E-E-A-T. Deliver trustworthy information in a way that sets you apart from the competition.

- Create keyword-rich internal links that help define what the page and content are about. Part disambiguation. Part just good UX.

- If you want something focused on LLMs, be more efficient with your words.

- Using structured lists can reduce token consumption by 20-40% because they remove fluff. Not because they’re more efficient*.

- Use commonly known abbreviations to save tokens, too.

*Interestingly, they are less efficient than traditional prose.
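
For the structured data part of disambiguation, one common approach is schema.org markup in JSON-LD, which ties an author to their other profiles via `sameAs`. Below is a minimal sketch that builds the snippet in Python. Every name and URL is a placeholder, and which properties you need depends on your site.

```python
import json

# Placeholder author details (hypothetical): swap in real names and profile URLs
author = {
    "@context": "https://schema.org",
    "@type": "Person",
    "name": "Jane Example",
    "jobTitle": "SEO Consultant",
    "url": "https://www.example.com/authors/jane-example",
    "sameAs": [
        "https://www.linkedin.com/in/jane-example",
        "https://x.com/janeexample",
    ],
}

# Wrap the JSON-LD in the script tag that goes into the page's HTML
snippet = (
    '<script type="application/ld+json">\n'
    + json.dumps(author, indent=2)
    + "\n</script>"
)
print(snippet)
```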

Almost all of this is about giving people what they want quickly and removing any ambiguity. In an internet full of crap, doing this really, really works.

Final Bits

There is some discussion around whether markdown for agents can help strip the fluff out of the HTML on your site, so agents can bypass the cluttered HTML and get straight to the good stuff.

How much of this could be solved by having a less fucked up approach to semantic HTML, I don’t know. Anyway, one to watch.
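
Whatever shape “markdown for agents” ends up taking, the underlying idea is to hand machines the main content without the page chrome. Here is a rough sketch using Python’s built-in `html.parser` that keeps visible text and skips script, style, nav, header, footer and aside elements. The skip list and the sample page are assumptions. Note that it only works because the sample uses semantic tags, which is rather the point about semantic HTML.

```python
from html.parser import HTMLParser


class MainTextExtractor(HTMLParser):
    """Keep visible text, skipping elements that are usually page chrome."""

    SKIP = {"script", "style", "nav", "header", "footer", "aside"}

    def __init__(self):
        super().__init__()
        self.depth = 0  # > 0 while inside a skipped element
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if not self.depth and data.strip():
            self.chunks.append(data.strip())


def html_to_text(html: str) -> str:
    parser = MainTextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.chunks)


# Hypothetical page: one useful answer wrapped in navigation, tracking and promos
page = """
<html><head><style>body{color:red}</style></head>
<body>
  <nav><a href="/">Home</a> | <a href="/blog">Blog</a></nav>
  <main><h1>What time is it in Tokyo?</h1><p>Tokyo uses JST, which is UTC+9.</p></main>
  <footer>Subscribe now</footer>
  <script>trackEverything()</script>
</body></html>
"""
print(html_to_text(page))
```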

Very SEO. Much AI.


Featured Image: Anton Vierietin/Shutterstock

Disclaimer: This article is sourced from external platforms. OverBeta has not independently verified the information. Readers are advised to verify details before relying on them.