Researchers from Anthropic investigated Claude 3.5 Haiku’s ability to decide when to break a line of text within a fixed width, a task that requires the model to track its position as it writes. The study yielded the surprising result that language models form internal patterns resembling the spatial awareness that humans use to track location in physical space.

Andreas Volpini tweeted about this paper and made an analogy to chunking content for AI consumption. In a broader sense, his comment works as a metaphor for how both writers and models navigate structure, finding coherence at the boundaries where one segment ends and another begins.

This research paper, however, is not about reading content but about generating text and identifying where to insert a line break in order to fit the text into an arbitrary fixed width. The purpose of doing that was to better understand what’s going on inside an LLM as it keeps track of text position, word choice, and line break boundaries while writing.

The researchers created an experimental task of generating text with a line break at a specific width. The purpose was to understand how Claude 3.5 Haiku decides on words to fit within a specified width and when to insert a line break, which required the model to track the current position within the line of text it is generating.

The experiment demonstrates how language models learn structure from patterns in text without explicit programming or supervision.

The Linebreaking Problem

The linebreaking task requires the model to decide whether the next word will fit on the current line or if it must start a new one. To succeed, the model must learn the line width constraint (the rule that limits how many characters can fit on a line, like in physical space on a sheet of paper). To do this the LLM must track the number of characters written, compute how many remain, and decide whether the next word fits. The task demands reasoning, memory, and planning. The researchers used attribution graphs to visualize how the model coordinates these calculations, showing distinct internal features for the character count, the next word, and the moment a line break is required.
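For readers who think in code, the three steps described above (track the count, compute what remains, decide whether the next word fits) can be written as an ordinary greedy wrapping routine. This is only a sketch of the task itself, not of how Claude 3.5 Haiku solves it internally; the function names `should_break` and `wrap` are made up for illustration.

```python
def should_break(chars_on_line: int, next_word: str, line_width: int) -> bool:
    """Return True if next_word does not fit on the current line."""
    # A separating space is needed unless the line is still empty.
    needed = len(next_word) + (1 if chars_on_line > 0 else 0)
    remaining = line_width - chars_on_line
    return needed > remaining


def wrap(text: str, line_width: int) -> list[str]:
    """Greedy wrap: keep adding words until the next one would overflow."""
    lines, current = [], ""
    for word in text.split():
        if current and should_break(len(current), word, line_width):
            lines.append(current)  # close the current line
            current = word         # the word starts a new line
        else:
            current = f"{current} {word}" if current else word
    if current:
        lines.append(current)
    return lines


if __name__ == "__main__":
    for line in wrap("the quick brown fox jumps", 10):
        print(line)
```

A program gets the character count for free from `len()`. The model has no such counter, which is why the researchers were interested in how it represents the same quantities internally.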

Continuous Counting

The researchers observed that Claude 3.5 Haiku represents line character counts not as counting step by step, but as a smooth geometric structure that behaves like a continuously curved surface, allowing the model to track position fluidly (on the fly) rather than counting symbol by symbol.

Something else that’s interesting is that they discovered the LLM had developed a boundary head (an “attention head”) that is responsible for detecting the line boundary. An attention mechanism weighs the importance of what is being considered (tokens). An attention head is a specialized component of the attention mechanism of an LLM. The boundary head, which is an attention head, specializes in the narrow task of detecting the end of line boundary.

The research paper states:

“One essential feature of the representation of line character counts is that the “boundary head” twists the representation, enabling each count to pair with a count slightly larger, indicating that the boundary is close. That is, there is a linear map QK which slides the character count curve along itself. Such an action is not admitted by generic high-curvature embeddings of the circle or the interval like the ones in the physical model we constructed. But it is present in both the manifold we observe in Haiku and, as we now show, in the Fourier construction.”

How Boundary Sensing Works

The researchers found that Claude 3.5 Haiku knows when a line of text is almost reaching the end by comparing two internal signals:

- How many characters it has already generated, and

- How long the line is supposed to be.

The aforementioned boundary attention heads decide which parts of the text to focus on. Some of these heads specialize in recognizing when the line is about to reach its limit. They do this by slightly rotating or lining up the two internal signals (the character count and the maximum line width) so that when they nearly match, the model’s attention shifts toward inserting a line break.

The researchers explain:

“To detect an approaching line boundary, the model must compare two quantities: the current character count and the line width. We find attention heads whose QK matrix rotates one counting manifold to align it with the other at a specific offset, creating a large inner product when the difference of the counts falls within a target range. Multiple heads with different offsets work together to precisely estimate the characters remaining.”
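The rotate-and-compare idea in that quote can be illustrated with a toy Fourier embedding. The sketch below is not the model’s learned weights or the paper’s code; it is a hand-built construction in which `embed`, `rotate`, `boundary_score`, and `estimate_remaining`, along with the `period` and `n_freqs` values, are all assumptions chosen for illustration. Each count is placed on a set of circles, a rotation slides the count curve along itself by a fixed offset, and the inner product with the line-width embedding peaks when the characters remaining equal that offset.

```python
import math


def embed(count: int, period: int = 150, n_freqs: int = 6) -> list[float]:
    """Embed a count as (cos, sin) pairs at several frequencies (toy choice)."""
    vec = []
    for k in range(1, n_freqs + 1):
        angle = 2 * math.pi * k * count / period
        vec += [math.cos(angle), math.sin(angle)]
    return vec


def rotate(vec: list[float], offset: int, period: int = 150) -> list[float]:
    """Rotate each pair so the embedding of c becomes the embedding of c + offset."""
    out = []
    for i in range(0, len(vec), 2):
        k = i // 2 + 1  # frequency index of this (cos, sin) pair
        theta = 2 * math.pi * k * offset / period
        c, s = vec[i], vec[i + 1]
        out += [c * math.cos(theta) - s * math.sin(theta),
                c * math.sin(theta) + s * math.cos(theta)]
    return out


def boundary_score(char_count: int, line_width: int, offset: int) -> float:
    """Inner product of the shifted count embedding with the width embedding."""
    query = rotate(embed(char_count), offset)
    key = embed(line_width)
    return sum(q * k for q, k in zip(query, key))


def estimate_remaining(char_count: int, line_width: int, offsets=range(0, 20)) -> int:
    """Several hypothetical 'heads', one per offset; the best-matching one wins."""
    return max(offsets, key=lambda o: boundary_score(char_count, line_width, o))


if __name__ == "__main__":
    print(round(boundary_score(45, 50, 5), 3))  # largest when 5 characters remain
    print(estimate_remaining(45, 50))
```

In this toy version the score is highest exactly when `char_count + offset == line_width`, which is the sense in which a single head is tuned to a specific distance from the boundary and several heads together can pin down the characters remaining.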

Final Stage

At this stage of the experiment, the model has already determined how close it is to the line’s boundary and how long the next word will be. The last step is to use that information.

Here’s how it’s explained:

“The final step of the linebreak task is to combine the estimate of the line boundary with the prediction of the next word to determine whether the next word will fit on the line, or if the line should be broken.”

The researchers found that certain internal features in the model activate when the next word would cause the line to exceed its limit, effectively serving as boundary detectors. When that happens, the model raises the chance of predicting a newline symbol and lowers the chance of predicting another word. Other features do the opposite: they activate when the word still fits, lowering the chance of inserting a line break.

Together, these two forces, one pushing for a line break and one holding it back, balance out to make the decision.
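One loose way to picture this push and pull is as two opposing signals feeding a single probability. The sketch below is a simplified illustration, not the paper’s measured features: `newline_probability`, the two feature definitions, and the `gain` value are all hypothetical.

```python
import math


def newline_probability(chars_remaining: int, next_word_len: int, gain: float = 2.0) -> float:
    """Toy balance of a 'break' signal against a 'keep writing' signal."""
    margin = chars_remaining - next_word_len  # >= 0 means the word fits
    break_feature = max(0.0, -margin)         # active when the word would overflow
    fit_feature = max(0.0, margin + 1)        # active when the word still fits
    logit = gain * (break_feature - fit_feature)
    return 1.0 / (1.0 + math.exp(-logit))     # squash to a probability


if __name__ == "__main__":
    print(newline_probability(3, 8) > 0.5)   # word overflows: newline favored
    print(newline_probability(10, 4) > 0.5)  # word fits: newline suppressed
```

Only one of the two toy features is active at a time, so whichever one fires decides the direction of the push.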

Models Can Have Visual Illusions?

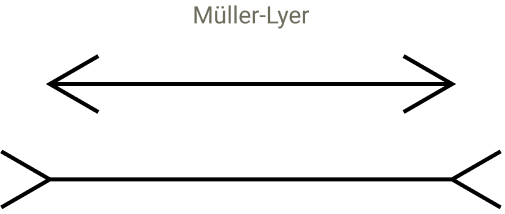

The next part of the research is kind of incredible because they endeavored to test whether the model could be susceptible to visual illusions that would trip it up. They started with the idea of how humans can be tricked by visual illusions that present a false perspective, making lines of the same length appear to be different lengths, one shorter than the other.

Screenshot Of A Visual Illusion

The researchers inserted artificial tokens, such as “@@,” to see how they disrupted the model’s sense of position. These tests caused misalignments in the internal patterns the model uses to keep track of position, similar to visual illusions that trick human perception. This caused the model’s sense of line boundaries to shift, showing that its perception of structure depends on context and learned patterns. Even though LLMs don’t see, disrupting the relevant attention heads distorts their internal organization, similar to how humans misjudge what they see.

They explained:

“We find that it does modulate the predicted next token, disrupting the newline prediction! As predicted, the relevant heads get distracted: whereas with the original prompt, the heads attend from newline to newline, in the altered prompt, the heads also attend to the @@.”

They wondered whether there was something special about the @@ characters or whether any other random characters would disrupt the model’s ability to successfully complete the task. So they ran a test with 180 different sequences and found that most of them did not disrupt the model’s ability to predict the line break point. They discovered that only a small group of code-related characters were able to distract the relevant attention heads and disrupt the counting process.

LLMs Have Visual-Like Perception For Text

The study shows how text-based features evolve into smooth geometric systems inside a language model. It also shows that models don’t only process symbols, they create perception-based maps from them. This part, about perception, is to me what’s really interesting about the research. They keep circling back to analogies related to human perception and how those analogies keep fitting into what they see going on inside the LLM.

They write:

“Although we sometimes describe the early layers of language models as responsible for “detokenizing” the input, it is perhaps more evocative to think of this as perception. The beginning of the model is really responsible for seeing the input, and much of the early circuitry is in service of sensing or perceiving the text similar to how early layers in vision models implement low level perception.”

Then a little later they write:

“The geometric and algorithmic patterns we observe have suggestive parallels to perception in biological neural systems. …These features exhibit dilation—representing increasingly large character counts activating over increasingly large ranges—mirroring the dilation of number representations in biological brains. Moreover, the organization of the features on a low dimensional manifold is an instance of a common motif in biological cognition. While the analogies are not perfect, we suspect that there is still fruitful conceptual overlap from increased collaboration between neuroscience and interpretability.”

Implications For SEO?

Arthur C. Clarke wrote that advanced technology is indistinguishable from magic. I think that once you understand a technology it becomes more relatable and less like magic. Not all knowledge has a utilitarian use, and I think understanding how an LLM perceives content is useful to the extent that it’s no longer magical. Will this research make you a better SEO? It deepens our understanding of how language models organize and interpret content structure, and makes it more understandable and less like magic.

Read the research here:

When Models Manipulate Manifolds: The Geometry of a Counting Task

Featured Image by Shutterstock/Krot_Studio
