Google Cloud is introducing what it calls its most powerful artificial intelligence infrastructure to date, unveiling a seventh-generation Tensor Processing Unit and expanded Arm-based computing options designed to meet surging demand for AI model deployment, which the company characterizes as a fundamental industry shift from training models to serving them to billions of users.

The announcement, made Thursday, centers on Ironwood, Google's latest custom AI accelerator chip, which will become generally available in the coming weeks. In a striking validation of the technology, Anthropic, the AI safety company behind the Claude family of models, disclosed plans to access up to one million of these TPU chips, a commitment worth tens of billions of dollars and among the largest known AI infrastructure deals to date.

The move underscores an intensifying competition among cloud providers to control the infrastructure layer powering artificial intelligence, even as questions mount about whether the industry can sustain its current pace of capital expenditure. Google's approach, building custom silicon rather than relying solely on Nvidia's dominant GPU chips, amounts to a long-term bet that vertical integration from chip design through software will deliver superior economics and performance.

Why companies are racing to serve AI models, not just train them

Google executives framed the announcements around what they call "the age of inference," a transition point where companies shift resources from training frontier AI models to deploying them in production applications serving millions or billions of requests daily.

"Today's frontier models, including Google's Gemini, Veo, and Imagen and Anthropic's Claude, train and serve on Tensor Processing Units," said Amin Vahdat, vice president and general manager of AI and Infrastructure at Google Cloud. "For many organizations, the focus is shifting from training these models to powering useful, responsive interactions with them."

This transition has profound implications for infrastructure requirements. Where training workloads can often tolerate batch processing and longer completion times, inference (the process of actually running a trained model to generate responses) demands consistently low latency, high throughput, and unwavering reliability. A chatbot that takes 30 seconds to respond, or a coding assistant that frequently times out, becomes unusable regardless of the underlying model's capabilities.

Agentic workflows, where AI systems take autonomous actions rather than simply responding to prompts, create particularly complex infrastructure challenges, requiring tight coordination between specialized AI accelerators and general-purpose computing.

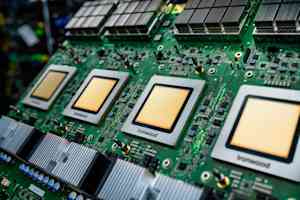

Inside Ironwood's architecture: 9,216 chips working as one supercomputer

Ironwood is more than an incremental improvement over Google's sixth-generation TPUs. According to technical specifications shared by the company, it delivers more than four times better performance for both training and inference workloads compared to its predecessor, gains that Google attributes to a system-level co-design approach rather than simply increasing transistor counts.

The architecture's most striking feature is its scale. A single Ironwood "pod," a tightly integrated unit of TPU chips functioning as one supercomputer, can connect up to 9,216 individual chips through Google's proprietary Inter-Chip Interconnect network operating at 9.6 terabits per second. To put that bandwidth in perspective, it's roughly equivalent to downloading the entire Library of Congress in under two seconds.

This massive interconnect fabric allows the 9,216 chips to share access to 1.77 petabytes of High Bandwidth Memory, memory fast enough to keep pace with the chips' processing speeds. That's roughly 40,000 high-definition Blu-ray movies' worth of working memory, instantly accessible by thousands of processors simultaneously. "For context, that means Ironwood Pods can deliver 118x more FP8 ExaFLOPS versus the next closest competitor," Google stated in technical documentation.
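The pod-level figures above can be sanity-checked with simple arithmetic. The sketch below assumes decimal (SI) units and an even split of memory across chips; neither assumption is stated in the article, and the function names are illustrative only.

```python
# Back-of-the-envelope check of the pod figures quoted in the article.
# Assumption (not from the article): SI units, so 1 PB = 10**15 bytes
# and 1 GB = 10**9 bytes, with memory divided evenly across chips.

CHIPS_PER_POD = 9_216
POD_HBM_PETABYTES = 1.77
ICI_TERABITS_PER_SECOND = 9.6


def hbm_per_chip_gb(pod_hbm_pb: float, chips: int) -> float:
    """Average HBM per chip, in gigabytes, for an evenly divided pod."""
    return pod_hbm_pb * 1e15 / chips / 1e9


def terabits_to_terabytes(terabits: float) -> float:
    """Convert terabits to terabytes (8 bits per byte)."""
    return terabits / 8


if __name__ == "__main__":
    per_chip = hbm_per_chip_gb(POD_HBM_PETABYTES, CHIPS_PER_POD)
    print(f"HBM per chip: about {per_chip:.0f} GB")
    rate = terabits_to_terabytes(ICI_TERABITS_PER_SECOND)
    print(f"Interconnect rate: {rate:.1f} terabytes per second")
```

Under these assumptions the per-chip share works out to a little over 190 GB, which is the scale of memory each accelerator contributes to the shared pool.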

The system employs Optical Circuit Switching technology that acts as a "dynamic, reconfigurable fabric." When individual components fail or require maintenance (inevitable at this scale), the OCS technology automatically reroutes data traffic around the interruption within milliseconds, allowing workloads to continue running without user-visible disruption.

This reliability focus reflects lessons learned from deploying five previous TPU generations. Google reported that its fleet-wide uptime for liquid-cooled systems has maintained approximately 99.999% availability since 2020, equivalent to less than six minutes of downtime per year.
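The link between "five nines" and minutes of downtime is a one-line calculation. The sketch below assumes a 365-day year; the helper name is hypothetical.

```python
# Convert an availability percentage into allowed downtime per year.
# Assumption: a 365-day year (525,600 minutes).

MINUTES_PER_YEAR = 365 * 24 * 60


def downtime_minutes_per_year(availability_percent: float) -> float:
    """Minutes per year a system may be down at the given availability."""
    unavailable_fraction = 1.0 - availability_percent / 100.0
    return unavailable_fraction * MINUTES_PER_YEAR


if __name__ == "__main__":
    for pct in (99.9, 99.99, 99.999):
        minutes = downtime_minutes_per_year(pct)
        print(f"{pct}% availability allows {minutes:.2f} minutes of downtime per year")
```

At 99.999% the budget is a little over five minutes per year, consistent with the "less than six minutes" figure above.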

Anthropic's billion-dollar bet validates Google's custom silicon strategy

Perhaps the most significant external validation of Ironwood's capabilities comes from Anthropic's commitment to access up to one million TPU chips, a staggering figure in an industry where even clusters of 10,000 to 50,000 accelerators are considered massive.

"Anthropic and Google have a longstanding partnership and this latest expansion will help us continue to grow the compute we need to define the frontier of AI," said Krishna Rao, Anthropic's chief financial officer, in the official partnership agreement. "Our customers, from Fortune 500 companies to AI-native startups, depend on Claude for their most important work, and this expanded capacity ensures we can meet our exponentially growing demand."

According to a separate statement, Anthropic will have access to "well over a gigawatt of capacity coming online in 2026," enough electricity to power a small city. The company specifically cited TPUs' "price-performance and efficiency" as key factors in the decision, along with "existing experience in training and serving its models with TPUs."

Industry analysts estimate that a commitment to access one million TPU chips, with associated infrastructure, networking, power, and cooling, likely represents a multi-year contract worth tens of billions of dollars, among the largest known cloud infrastructure commitments in history.

James Bradbury, Anthropic's head of compute, elaborated on the inference focus: "Ironwood's improvements in both inference performance and training scalability will help us scale efficiently while maintaining the speed and reliability our customers expect."

Google's Axion processors target the computing workloads that make AI possible

Alongside Ironwood, Google introduced expanded options for its Axion processor family, custom Arm-based CPUs designed for general-purpose workloads that support AI applications but don't require specialized accelerators.

The N4A instance type, now entering preview, targets what Google describes as "microservices, containerized applications, open-source databases, batch, data analytics, development environments, experimentation, data preparation and web serving jobs that make AI applications possible." The company claims N4A delivers up to 2X better price-performance than comparable current-generation x86-based virtual machines.

Google is also previewing C4A metal, its first bare-metal Arm instance, which provides dedicated physical servers for specialized workloads such as Android development, automotive systems, and software with strict licensing requirements.

The Axion strategy reflects a growing conviction that the future of computing infrastructure requires both specialized AI accelerators and highly efficient general-purpose processors. While a TPU handles the computationally intensive task of running an AI model, Axion-class processors manage data ingestion, preprocessing, application logic, API serving, and countless other tasks in a modern AI application stack.

Early customer results suggest the approach delivers measurable economic benefits. Vimeo reported observing "a 30% improvement in performance for our core transcoding workload compared to comparable x86 VMs" in initial N4A tests. ZoomInfo measured "a 60% improvement in price-performance" for data processing pipelines running on Java services, according to Sergei Koren, the company's chief infrastructure architect.

Software tools turn raw silicon performance into developer productivity

Hardware performance means little if developers cannot easily harness it. Google emphasized that Ironwood and Axion are integrated into what it calls AI Hypercomputer, "an integrated supercomputing system that brings together compute, networking, storage, and software to improve system-level performance and efficiency."

According to an October 2025 IDC Business Value Snapshot study, AI Hypercomputer customers achieved on average 353% three-year return on investment, 28% lower IT costs, and 55% more efficient IT teams.

Google disclosed several software enhancements designed to maximize Ironwood utilization. Google Kubernetes Engine now offers advanced maintenance and topology awareness for TPU clusters, enabling intelligent scheduling and highly resilient deployments. The company's open-source MaxText framework now supports advanced training techniques including Supervised Fine-Tuning and Generative Reinforcement Policy Optimization.

Perhaps most significant for production deployments, Google's Inference Gateway intelligently load-balances requests across model servers to optimize critical metrics. According to Google, it can reduce time-to-first-token latency by 96% and serving costs by up to 30% through techniques like prefix-cache-aware routing.

The Inference Gateway monitors key metrics including KV cache hits, GPU or TPU utilization, and request queue length, then routes incoming requests to the optimal replica. For conversational AI applications where multiple requests might share context, routing requests with shared prefixes to the same server instance can dramatically reduce redundant computation.
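To make the idea of prefix-cache-aware routing concrete, here is a minimal sketch of a scorer that prefers replicas already holding a matching prompt prefix while penalizing load. It is not Google's implementation: the `Replica` class, the scoring weights, and the use of string prefixes as a stand-in for KV cache contents are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    """Hypothetical view of one model server as seen by a router."""
    name: str
    queue_length: int = 0          # requests waiting on this replica
    utilization: float = 0.0       # accelerator utilization, 0.0 to 1.0
    cached_prefixes: list[str] = field(default_factory=list)


def longest_cached_prefix(replica: Replica, prompt: str) -> int:
    """Length of the longest cached prefix that the prompt starts with."""
    best = 0
    for prefix in replica.cached_prefixes:
        if prompt.startswith(prefix):
            best = max(best, len(prefix))
    return best


def score(replica: Replica, prompt: str,
          cache_weight: float = 1.0,
          queue_weight: float = 0.1,
          util_weight: float = 0.5) -> float:
    """Higher is better: reward cache reuse, penalize queueing and load.

    The weights are arbitrary illustrative values, not tuned numbers.
    """
    hit_fraction = longest_cached_prefix(replica, prompt) / max(len(prompt), 1)
    return (cache_weight * hit_fraction
            - queue_weight * replica.queue_length
            - util_weight * replica.utilization)


def pick_replica(replicas: list[Replica], prompt: str) -> Replica:
    """Route the request to the replica with the best score."""
    return max(replicas, key=lambda r: score(r, prompt))


if __name__ == "__main__":
    system_prompt = "You are a helpful assistant. "
    busy_but_warm = Replica("a", queue_length=2, utilization=0.6,
                            cached_prefixes=[system_prompt])
    idle_but_cold = Replica("b", queue_length=0, utilization=0.1)
    replicas = [busy_but_warm, idle_but_cold]

    print(pick_replica(replicas, system_prompt + "What is a TPU?").name)
    print(pick_replica(replicas, "Unrelated prompt with no shared prefix").name)
```

With these weights, a request sharing the cached system prompt goes to the warm replica despite its longer queue, while an unrelated request goes to the idle one. A production router would track cache state at the token or block level and tune the trade-off empirically.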

The hidden challenge: powering and cooling one-megawatt server racks

Behind these announcements lies a massive physical infrastructure challenge that Google addressed at the recent Open Compute Project EMEA Summit. The company disclosed that it's implementing +/-400 volt direct current power delivery capable of supporting up to one megawatt per rack, a tenfold increase from typical deployments.

"The AI era requires even greater power delivery capabilities," explained Madhusudan Iyengar and Amber Huffman, Google principal engineers, in an April 2025 blog post. "ML will require more than 500 kW per IT rack before 2030."

Google is collaborating with Meta and Microsoft to standardize electrical and mechanical interfaces for high-voltage DC distribution. The company selected 400 VDC specifically to leverage the supply chain established by electric vehicles, "for greater economies of scale, more efficient manufacturing, and improved quality and scale."
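Part of the appeal of higher distribution voltage follows from the basic relation I = P / V: for the same rack power, higher voltage means less current to carry. The sketch below is illustrative only. The article does not say whether a rack load sees 400 V or the full 800 V between the positive and negative rails, so both are shown, and the 48 V row is a hypothetical low-voltage comparison point that does not come from the article.

```python
# Current required to deliver a given DC power at a given voltage (I = P / V).
# Assumptions: ideal DC delivery with no conversion losses. The voltage
# levels below are illustrative; 48 V is a hypothetical comparison point.

RACK_POWER_WATTS = 1_000_000  # the one-megawatt rack cited in the article


def dc_current_amps(power_watts: float, voltage_volts: float) -> float:
    """Current in amperes needed to deliver power_watts at voltage_volts."""
    if voltage_volts <= 0:
        raise ValueError("voltage must be positive")
    return power_watts / voltage_volts


if __name__ == "__main__":
    for volts in (48, 400, 800):
        amps = dc_current_amps(RACK_POWER_WATTS, volts)
        print(f"{volts:>4} V -> {amps:,.0f} A for a 1 MW rack")
```

Lower current allows thinner conductors and reduces resistive losses, which scale with the square of the current.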

On cooling, Google revealed it will contribute its fifth-generation cooling distribution unit design to the Open Compute Project. The company has deployed liquid cooling "at GigaWatt scale across more than 2,000 TPU Pods in the past seven years" with fleet-wide availability of approximately 99.999%.

Water can transport approximately 4,000 times more heat per unit volume than air for a given temperature change, which is critical as individual AI accelerator chips increasingly dissipate 1,000 watts or more.
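The water-versus-air comparison comes down to volumetric heat capacity, the product of density and specific heat. The sketch below uses approximate textbook property values near room temperature and atmospheric pressure; these values are assumptions, not figures from the article, and the exact ratio shifts with temperature and pressure.

```python
# Compare how much heat a unit volume of water and of air absorbs per kelvin.
# Property values are approximate textbook figures near 20-25 degrees C at
# atmospheric pressure (assumptions, not taken from the article).

WATER_DENSITY_KG_M3 = 997.0
WATER_SPECIFIC_HEAT_J_KG_K = 4186.0
AIR_DENSITY_KG_M3 = 1.2
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0


def volumetric_heat_capacity(density: float, specific_heat: float) -> float:
    """Heat absorbed per cubic metre per kelvin, in J/(m^3*K)."""
    return density * specific_heat


def water_to_air_ratio() -> float:
    """Ratio of water's volumetric heat capacity to that of air."""
    water = volumetric_heat_capacity(WATER_DENSITY_KG_M3, WATER_SPECIFIC_HEAT_J_KG_K)
    air = volumetric_heat_capacity(AIR_DENSITY_KG_M3, AIR_SPECIFIC_HEAT_J_KG_K)
    return water / air


if __name__ == "__main__":
    print(f"Water carries about {water_to_air_ratio():,.0f}x more heat per unit volume than air")
```

With these inputs the ratio comes out in the low-to-mid thousands, the same order of magnitude as the figure quoted above.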

Custom silicon gambit challenges Nvidia's AI accelerator dominance

Google's announcements come as the AI infrastructure market reaches an inflection point. While Nvidia maintains overwhelming dominance in AI accelerators, holding an estimated 80-95% market share, cloud providers are increasingly investing in custom silicon to differentiate their offerings and improve unit economics.

Amazon Web Services pioneered this approach with Graviton Arm-based CPUs and Inferentia / Trainium AI chips. Microsoft has developed Cobalt processors and is reportedly working on AI accelerators. Google now offers the most comprehensive custom silicon portfolio among major cloud providers.

The strategy faces inherent challenges. Custom chip development requires enormous upfront investment, often billions of dollars. The software ecosystem for specialized accelerators lags behind Nvidia's CUDA platform, which benefits from 15+ years of developer tools. And rapid AI model architecture evolution creates risk that custom silicon optimized for today's models becomes less relevant as new techniques emerge.

Yet Google argues its approach delivers unique advantages. "This is how we built the first TPU ten years ago, which in turn unlocked the invention of the Transformer eight years ago, the very architecture that powers most of modern AI," the company noted, referring to the seminal "Attention Is All You Need" paper from Google researchers in 2017.

The argument is that tight integration ("model research, software, and hardware development under one roof") enables optimizations impossible with off-the-shelf components.

Beyond Anthropic, several other customers provided early feedback. Lightricks, which develops creative AI tools, reported that early Ironwood testing "makes us highly enthusiastic" about creating "more nuanced, precise, and higher-fidelity image and video generation for our millions of global customers," said Yoav HaCohen, the company's research director.

Google's announcements raise questions that will play out over coming quarters. Can the industry sustain current infrastructure spending, with major AI companies collectively committing hundreds of billions of dollars? Will custom silicon prove economically superior to Nvidia GPUs? How will model architectures evolve?

For now, Google appears committed to a strategy that has defined the company for decades: building custom infrastructure to enable applications impossible on commodity hardware, then making that infrastructure available to customers who want similar capabilities without the capital investment.

As the AI industry transitions from research labs to production deployments serving billions of users, that infrastructure layer (the silicon, software, networking, power, and cooling that make it all run) may prove as important as the models themselves.

And if Anthropic's willingness to commit to accessing up to one million chips is any indication, Google's bet on custom silicon designed specifically for the age of inference may be paying off just as demand reaches its inflection point.

Disclaimer: This article is sourced from external platforms. OverBeta has not independently verified the information. Readers are advised to verify details before relying on them.