OpenAI, Google, and Anthropic announced specialised medical AI capabilities within days of one another this month, a clustering that suggests competitive pressure rather than coincidental timing. Yet none of the releases is cleared as a medical device, approved for clinical use, or available for direct patient diagnosis, despite marketing language emphasising healthcare transformation.

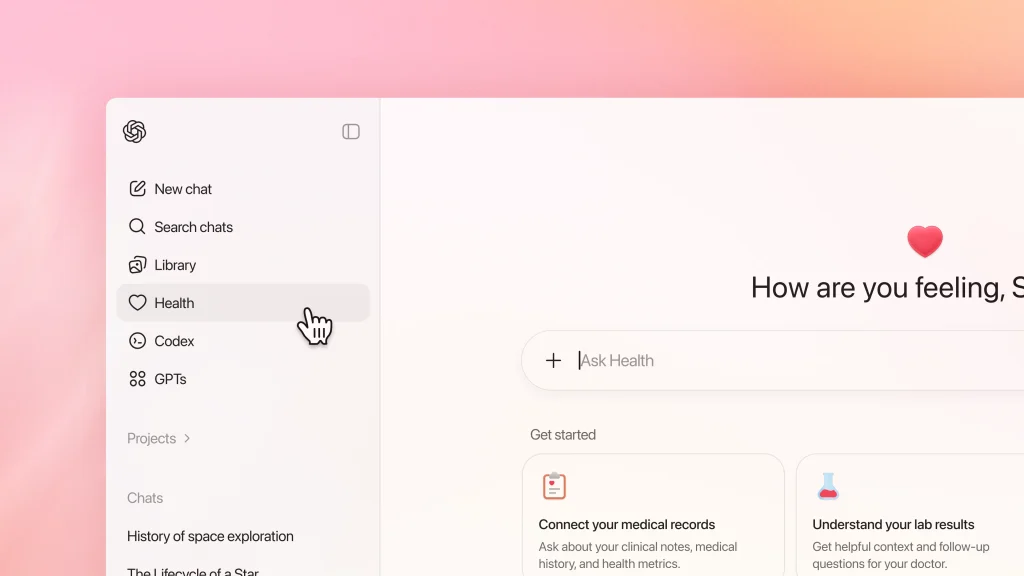

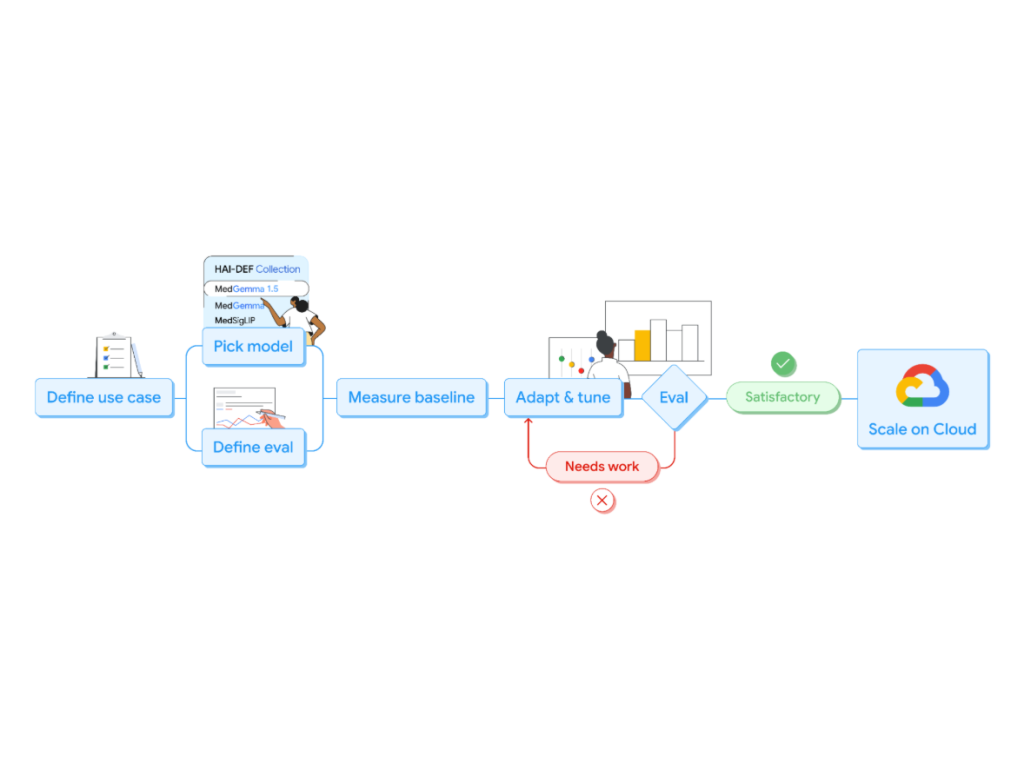

OpenAI introduced ChatGPT Health on January 7, allowing US users to connect medical records through partnerships with b.well, Apple Health, Function, and MyFitnessPal. Google released MedGemma 1.5 on January 13, expanding its open medical AI model to interpret three-dimensional CT and MRI scans alongside whole-slide histopathology images.

Anthropic announced Claude for Healthcare on January 11, offering HIPAA-compliant connectors to CMS coverage databases, ICD-10 coding systems, and the National Provider Identifier Registry.

All three companies are targeting the same workflow pain points (prior authorisation reviews, claims processing, clinical documentation) with similar technical approaches but different go-to-market strategies.

Developer platforms, not diagnostic merchandise

The architectural similarities are notable. Each system uses multimodal large language models fine-tuned on medical literature and clinical datasets. Each emphasises privacy protections and regulatory disclaimers. Each positions itself as supporting rather than replacing clinical judgment.

The differences lie in deployment and access models. OpenAI’s ChatGPT Health operates as a consumer-facing service with a waitlist for ChatGPT Free, Plus, and Pro subscribers outside the EEA, Switzerland, and the UK. Google’s MedGemma 1.5 is released as an open model through its Health AI Developer Foundations program, available for download via Hugging Face or deployment through Google Cloud’s Vertex AI.

Anthropic’s Claude for Healthcare integrates into existing enterprise workflows through Claude for Enterprise, targeting institutional buyers rather than individual consumers. The regulatory positioning is consistent across all three.

OpenAI states explicitly that Health “is not intended for diagnosis or treatment.” Google positions MedGemma as “starting points for developers to evaluate and adapt to their medical use cases.” Anthropic emphasises that outputs “are not intended to directly inform clinical diagnosis, patient management decisions, treatment recommendations, or any other direct clinical practice applications.”

Benchmark performance vs clinical validation

Medical AI benchmark results improved substantially across all three releases, though the gap between test performance and clinical deployment remains significant. Google reports that MedGemma 1.5 achieved 92.3% accuracy on MedAgentBench, Stanford’s medical agent task completion benchmark, compared with 69.6% for the previous Sonnet 3.5 baseline.

The model improved by 14 percentage points on MRI disease classification and three percentage points on CT findings in internal testing. Anthropic’s Claude Opus 4.5 scored 61.3% on MedCalc medical calculation accuracy tests with Python code execution enabled, and 92.3% on MedAgentBench.

The company also claims improvements in “honesty evaluations” related to factual hallucinations, though specific metrics were not disclosed.

OpenAI has not published benchmark comparisons for ChatGPT Health specifically, noting instead that “over 230 million people globally ask health and wellness-related questions on ChatGPT every week,” based on de-identified analysis of existing usage patterns.

These benchmarks measure performance on curated test datasets, not clinical outcomes in practice. Because medical errors can have life-threatening consequences, translating benchmark accuracy into clinical utility is more complex than in other AI application domains.

Regulatory pathway stays unclear

The regulatory framework for these medical AI tools remains ambiguous. In the US, the FDA’s oversight depends on intended use. Software that “supports or provides recommendations to a health care professional about prevention, diagnosis, or treatment of a disease” may require premarket review as a medical device. None of the announced tools has FDA clearance.

Liability questions are equally unresolved. When Banner Health’s CTO Mike Reagin states that the health system was “drawn to Anthropic’s focus on AI safety,” this addresses technology selection criteria, not legal liability frameworks.

If a clinician relies on Claude’s prior authorisation analysis and a patient suffers harm from delayed care, existing case law provides limited guidance on how responsibility would be allocated.

Regulatory approaches vary significantly across markets. While the FDA and Europe’s Medical Device Regulation provide established frameworks for software as a medical device, many APAC regulators have not issued specific guidance on generative AI diagnostic tools.

This regulatory ambiguity affects adoption timelines in markets where healthcare infrastructure gaps might otherwise accelerate implementation, creating a tension between clinical need and regulatory caution.

Administrative workflows, not clinical decisions

Real deployments remain carefully scoped. Novo Nordisk’s Louise Lind Skov, Director of Content Digitalisation, described using Claude for “document and content automation in pharma development,” focused on regulatory submission documents rather than patient diagnosis.

Taiwan’s National Health Insurance Administration applied MedGemma to extract data from 30,000 pathology reports for policy analysis, not treatment decisions.

The pattern suggests institutional adoption is concentrating on administrative workflows where errors are less immediately dangerous (billing, documentation, protocol drafting) rather than on direct clinical decision support, where medical AI capabilities would have the most dramatic impact on patient outcomes.

Medical AI capabilities are advancing faster than the institutions deploying them can navigate regulatory, liability, and workflow integration complexities. The technology exists. The US$20 monthly subscription provides access to sophisticated medical reasoning tools.

Whether that translates to transformed healthcare delivery depends on questions these coordinated announcements leave unaddressed.

